We have never depended on AI as much as we do today, and given how heavily we are investing in the field, that dependence is only going to increase. AI has revolutionized medicine, transportation, government, investment banking, and manufacturing, and has made possible some incredible technologies such as self-driving cars and virtual assistants. But do we understand how an AI system makes predictions? Can we be certain that the AI systems in use are making good decisions without bias? And is it really necessary to understand them, given their complexity?

Why should we care about Explainability?

We sometimes hear reports of AI systems failing and creating business risk. For example, IBM’s Watson cancer treatment tool gave unsafe recommendations for treating cancer patients, according to internal IBM documents. Microsoft’s AI chatbot Tay, which grew out of the company’s efforts to improve conversational understanding, started making racist comments within a day of launch after trolls on Twitter taught it offensive speech. Apple’s Face ID face recognition system on the iPhone X was fooled using a 3D-printed mask. A Tesla Model X crashed in California while on Autopilot, killing the driver. There are many other cases in law enforcement, recruitment, and investment banking where AI systems have produced unfavorable results.

Figure 1. Microsoft's AI chatbot Tay made racist tweets

In a more recent case, Apple launched its credit card, which was heavily criticized for offering credit limits up to 20 times higher to men than to women with very similar incomes and credit histories. Concerns were raised that the AI system might be gender-biased, but the company could not explain why this happened. In its statement, Apple said gender wasn’t even an input variable to the system, as laws prohibit it. But it is possible that another variable is highly correlated with gender (for example, holding a credit card from a women’s clothing company) and acts as a proxy for it. In any case, people were disappointed by the lack of transparency in the system.

Figure 2. Apple's credit card
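To see how a proxy variable can leak a protected attribute, here is a minimal sketch on synthetic data. Everything in it is an assumption made for illustration: the `proxy` feature, its 90% match rate with gender, and the toy `credit_limit` rule have nothing to do with the actual credit card system. The scoring rule never sees gender, yet the average limits still differ by gender because the proxy carries that information.

```python
import random
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(0)

# Synthetic applicants. Gender (0 or 1) is never passed to the scoring rule.
gender = [rng.randint(0, 1) for _ in range(2000)]

# Hypothetical proxy feature that agrees with gender 90% of the time.
proxy = [g if rng.random() < 0.9 else 1 - g for g in gender]


def credit_limit(proxy_value):
    # Toy scoring rule (an assumption) that only looks at the proxy feature.
    return 5000 if proxy_value == 0 else 3000


limits = [credit_limit(p) for p in proxy]
avg_by_gender = {
    g: mean(lim for lim, gg in zip(limits, gender) if gg == g) for g in (0, 1)
}
proxy_gender_corr = pearson(proxy, gender)

print(f"correlation(proxy, gender) = {proxy_gender_corr:.2f}")
print("average limit by gender:", avg_by_gender)
```

Dropping the protected attribute from the inputs is therefore not enough on its own. A check like this correlation is one simple way to look for candidate proxies.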

In all the above cases, investigations were done post hoc and explanations were given after the fact. Many argue that this approach does not capture the truth of the decision process, because such explanations are merely justifications for the results observed. Had a different result been obtained, a different fitting justification might have been given! A better scenario would be for AI systems to provide explanations throughout the decision-making process that leads to the observed result. This is what Explainable AI (XAI) systems hope to achieve.

XAI gives the following benefits:

- Improve human readability

- Justify the decisions made

- Avoid discrimination and societal bias

- Facilitate improvements

- Help detect overfitting

Why is AI difficult to explain?

The earliest algorithms used in AI have deep roots in statistics. They are simple models (think of regressions) with few parameters, and their behavior is easy to explain because each model weight directly shows how strongly, and in which direction, the corresponding feature affects the prediction. But real-world problems usually involve complex non-linear mappings between predictors and response, and require complex models to capture them. As the complexity of AI systems increased, so did their performance, but at the cost of interpretability. Now, with the advent of Deep Learning, models have millions of parameters and it is practically impossible to trace how individual input features affect the predictions, which is why they are called black-box algorithms.

Figure 3. Accuracy vs Explainability
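As a small illustration of why simple models are easy to explain, the sketch below fits a two-feature linear regression with plain gradient descent. The data-generating rule (y = 3*x1 - 2*x2 + 1), the learning rate, and the iteration count are all assumptions chosen for the example. The point is that the fitted weights can be read directly as the effect of each feature on the prediction.

```python
import random

rng = random.Random(0)

# Synthetic data from a known rule (assumed for illustration): y = 3*x1 - 2*x2 + 1
X = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(200)]
y = [3 * x1 - 2 * x2 + 1 for x1, x2 in X]

w1 = w2 = b = 0.0
learning_rate = 0.1
n = len(X)

for _ in range(2000):
    # Full-batch gradient of the mean squared error w.r.t. each parameter.
    g1 = g2 = gb = 0.0
    for (x1, x2), target in zip(X, y):
        err = (w1 * x1 + w2 * x2 + b) - target
        g1 += 2 * err * x1
        g2 += 2 * err * x2
        gb += 2 * err
    w1 -= learning_rate * g1 / n
    w2 -= learning_rate * g2 / n
    b -= learning_rate * gb / n

# Each weight is the change in the prediction per unit change in that feature.
print(f"w1={w1:.3f}, w2={w2:.3f}, b={b:.3f}")
```

Here the learned `w1` and `w2` should land very close to 3 and -2, so the explanation of the model is the model itself. A network with millions of weights offers no such direct reading.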

Neural networks perform so well because of the way they process data. They make few assumptions about the data and do not require engineered features. Instead, they learn features and model parameters together through backpropagation, an algorithm that propagates gradients of the loss back through the network so that the weights can be updated over thousands of iterations. These gradients flow from the loss function through millions of neurons, and it becomes practically impossible to trace and explain them. The result is a low-bias, high-performing model that we use at the cost of not knowing how it works.

Figure 4. A Deep Neural Network architecture

Due to their complexity, deep neural networks (DNNs) exhibit some surprising behavior. Since the output is built up from individual neurons that have learned to identify specific local features, DNNs can be fooled into giving incorrect results. Szegedy et al. [1] discuss this phenomenon, in which small perturbations that are imperceptible to humans are applied to an image to make the model misclassify it. Although such targeted perturbations are unlikely to occur naturally, they show why some outcomes can be really hard to explain.

Figure 5. Small perturbations are added to the images to fool the model into thinking they are ostriches. These perturbed images consistently fool other object detection models as well.
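The idea behind such perturbations can be sketched on a toy model. The example below is not the optimization procedure used in [1]. It uses a simpler gradient-sign step on a hypothetical linear classifier with random weights, and the input is a random vector standing in for pixel intensities. Every input value moves by the same small amount `epsilon`, yet the predicted class flips.

```python
import math
import random

rng = random.Random(0)
dim = 100

# Hypothetical fixed linear classifier: score > 0 means class "A", otherwise "B".
weights = [rng.uniform(-1, 1) for _ in range(dim)]
x = [rng.uniform(0, 1) for _ in range(dim)]  # stand-in for pixel intensities


def score(inputs):
    return sum(w * v for w, v in zip(weights, inputs))


def predict(inputs):
    return "A" if score(inputs) > 0 else "B"


original = predict(x)

# For a linear model, the gradient of the score w.r.t. the input is `weights`,
# so stepping along sign(weights) changes the score fastest per unit of change.
direction = -1 if original == "A" else 1

# Smallest uniform step that reaches the decision boundary, plus a 5% margin.
epsilon = 1.05 * abs(score(x)) / sum(abs(w) for w in weights)

# Note: the perturbed values are not clipped back to [0, 1] in this sketch.
x_adv = [v + direction * epsilon * math.copysign(1, w) for v, w in zip(x, weights)]

print(f"epsilon per input: {epsilon:.4f}")
print("prediction before:", original, "| after:", predict(x_adv))
```

Because many tiny changes add up across all input dimensions, the total shift in the score is large even though no single input changes much. That is one intuition for why high-dimensional models can be fooled by changes a human would not notice.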

What kind of explanations should we seek from AI?

Understanding every aspect of an AI system is impractical. Even if one were to try to explain these models fully, in many cases the investment of time and money would not be justified by the value it brings. Just as an ML model has a task and performance requirements, designers need to decide which explanations are required and incorporate them into the model-building process. For example, Paudyal et al. [2] discuss an AI application that helps people learn sign languages. If a student gets a sign wrong, the app not only tells the user that it was incorrect but also explains which aspect was wrong, such as facial expression, location of the sign, movement, or orientation. They achieve this by training separate models for each of the components in a waterfall architecture and aggregating their outputs to give the response.

Figure 6. A sign language learning system that gives contextual feedback to users
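The general pattern of checking components separately and then aggregating the results into feedback can be sketched as follows. This is a much-simplified illustration and not the models from [2]: `check_component` is a hypothetical stand-in that compares a single number against an expected value, and the component values and tolerance are made up.

```python
from dataclasses import dataclass


@dataclass
class ComponentResult:
    name: str
    correct: bool


def check_component(name, observed, expected, tolerance):
    # Stand-in for a trained per-component model: just a distance threshold.
    return ComponentResult(name, abs(observed - expected) <= tolerance)


def explain_attempt(observed, expected, tolerance=0.2):
    """Check each component separately, then aggregate into one message."""
    results = [
        check_component(name, observed[name], expected[name], tolerance)
        for name in expected
    ]
    wrong = [r.name for r in results if not r.correct]
    if not wrong:
        return "Correct sign."
    return "Incorrect sign: check " + ", ".join(wrong) + "."


# Made-up feature values for one reference sign and one student attempt.
expected = {"location": 0.5, "movement": 0.8, "orientation": 0.1}
attempt = {"location": 0.55, "movement": 0.2, "orientation": 0.15}

feedback = explain_attempt(attempt, expected)
print(feedback)
```

Because each component is judged on its own, the explanation falls out of the structure of the system instead of being reconstructed afterwards.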

Other factors to consider are the impact of the system on its users, compliance with laws on the transparency of decision-making, and the need to prevent the system from learning societal bias in certain applications. Self-driving cars need to be able to explain what caused them to drive a certain way so that errors in judgment can be detected during development. Regulations such as the GDPR in the EU, and proposed legislation such as the Algorithmic Accountability Act in the US, call for businesses to conduct AI impact assessments, disclose the methodology and data used in AI models, and understand the adverse impacts of algorithmic decisions. In the Apple credit card example above, the system needed to explain how different factors led it to the specific credit lines offered to applicants. Such explanations make it easier for companies to monitor whether their AI systems are biased against people of certain demographics. Other fields such as medical diagnosis, trading, banking, and recruitment certainly need explainable decisions because of their impact on people. Bias and fairness in AI systems have attracted growing attention in recent times.

Figure 7. An XAI model

How can we achieve XAI?

Several approaches are being considered to achieve XAI. One suggestion is to train a separate model that runs in parallel with the predicting system and gives step-by-step explanations. But this could again lead to post hoc explanations, as the second model could simply learn to map results to explanations. Another popular view is that interpretability should be integrated into the design process: it should be addressed early on, and as the model is being built, explanations for the intermediate observations must be included. Performance and explainability often trade off against each other, so it is important to set clear explanation requirements up front. This is, of course, easier said than done, but the following papers show what explainability can look like in the computer vision field.

Yosinski et al. [3] discuss understanding DNNs through visualizations. They used backpropagation to produce images that maximize the activations of an intermediate layer for a given class. The images generated in figure 8 show which features these neurons look for when detecting a particular object class. Zeiler et al. [4] show how different layers in an object detection model learn to detect certain features of the object. Initial layers detect fine features like edges and corners, while larger and larger patterns are detected in the deeper layers, as seen in figure 9. We can see that these models have an inherent decision-making mechanism that builds up from input to output as more and more evidence is gathered at every stage. Outputs from such intermediate layers can be extracted to explain the final output step by step. Similar methods can be used to explain results in other domains.

Figure 8. Images that produce maximum class activations in an intermediate layer of a DNN

Figure 9. The figure shows how different layers learn image features for object detection. The first few layers only look for fine features like edges while deeper layers learn to identify patterns in different classes of objects
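The core of activation maximization, gradient ascent on the input instead of on the weights, can be shown on a single toy neuron. This is only a sketch under stated assumptions and not the setup from [3]: the "neuron" is `tanh(w . x + b)` with random fixed weights, the input is a 16-value vector and not an image, and a unit-norm constraint stands in for the regularization that real visualizations need.

```python
import math
import random

rng = random.Random(0)
dim = 16

# Hypothetical neuron with fixed random weights: activation = tanh(w . x + b)
w = [rng.gauss(0, 1) for _ in range(dim)]
b = 0.1


def activation(x):
    return math.tanh(sum(wi * xi for wi, xi in zip(w, x)) + b)


def input_gradient(x):
    # d/dx tanh(w . x + b) = (1 - tanh^2(w . x + b)) * w
    a = activation(x)
    return [(1 - a * a) * wi for wi in w]


def project_to_unit_ball(x):
    # Keep the input bounded so the ascent cannot simply blow up its scale.
    norm = math.sqrt(sum(v * v for v in x))
    return [v / norm for v in x] if norm > 1 else x


x = [rng.gauss(0, 0.01) for _ in range(dim)]  # start from a near-blank input
start_activation = activation(x)

for _ in range(200):
    grad = input_gradient(x)
    # Gradient ascent step on the input; the weights stay fixed.
    x = project_to_unit_ball([v + 0.1 * g for v, g in zip(x, grad)])

end_activation = activation(x)

# How closely the optimized input lines up with the neuron's weight vector.
cosine = sum(wi * xi for wi, xi in zip(w, x)) / (
    math.sqrt(sum(wi * wi for wi in w)) * math.sqrt(sum(v * v for v in x))
)
print(f"activation: {start_activation:.3f} -> {end_activation:.3f}")
print(f"cosine(input, weights) = {cosine:.3f}")
```

The optimized input ends up pointing in nearly the same direction as the weight vector, which is the pattern this neuron responds to most strongly. In a deep network the same loop runs through many layers via backpropagation, and the resulting input is what gets rendered as an image.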

We can even visualize the data directly to get a better sense of how an AI system might arrive at its predictions. Dimensionality reduction is a useful technique for visualizing the important structure in high-dimensional data. Again, this can be used only in some fields, and novel ideas need to be developed to achieve XAI in other domains.
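As one example of dimensionality reduction, the sketch below runs a bare-bones principal component analysis (PCA) using power iteration. The 3-D data is synthetic and, by assumption, varies mostly along the direction (1, 2, -1). Real data would have many more dimensions, and a library implementation would normally be used instead of this hand-rolled one.

```python
import math
import random

rng = random.Random(0)
dim = 3

# Synthetic 3-D points that mostly vary along the direction (1, 2, -1).
points = []
for _ in range(300):
    t = rng.uniform(-1, 1)
    points.append(
        [t + rng.gauss(0, 0.05), 2 * t + rng.gauss(0, 0.05), -t + rng.gauss(0, 0.05)]
    )

# Center the data, then build the sample covariance matrix.
means = [sum(p[j] for p in points) / len(points) for j in range(dim)]
centered = [[p[j] - means[j] for j in range(dim)] for p in points]
cov = [
    [sum(row[i] * row[j] for row in centered) / (len(points) - 1) for j in range(dim)]
    for i in range(dim)
]

# Power iteration: repeatedly apply the covariance matrix and renormalize.
# The vector converges to the top eigenvector, i.e. the first principal component.
component = [1.0, 0.0, 0.0]
for _ in range(100):
    nxt = [sum(cov[i][j] * component[j] for j in range(dim)) for i in range(dim)]
    norm = math.sqrt(sum(v * v for v in nxt))
    component = [v / norm for v in nxt]

# 1-D coordinate of every point along the first principal component.
projected = [sum(r * c for r, c in zip(row, component)) for row in centered]

# Compare with the direction used to generate the data.
alignment = abs(component[0] + 2 * component[1] - component[2]) / math.sqrt(6)
print("first principal component:", [round(c, 3) for c in component])
print(f"alignment with (1, 2, -1): {alignment:.3f}")
```

Plotting `projected` (or the first two components for richer data) gives a low-dimensional picture in which clusters and outliers are often visible at a glance.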

Current state of XAI

Many companies are investing in XAI as decision-makers are seeking explanations from their AI models. In fact, 68 percent of business leaders believe that customers will demand more explainability from AI in the next three years, according to an IBM Institute for Business Value survey.

Google Cloud offers XAI in some of its products and lets users visually investigate model behavior using the What-If Tool. It gives scores explaining how different factors contributed to the end result. It also helps in continuous evaluation and optimization of the model.

IBM's AI Explainability 360 is an open-source toolkit of state-of-the-art algorithms that support the interpretability and explainability of machine learning models. Its aim is to determine the major factors that influenced the decision, uncovering issues like inherent bias.

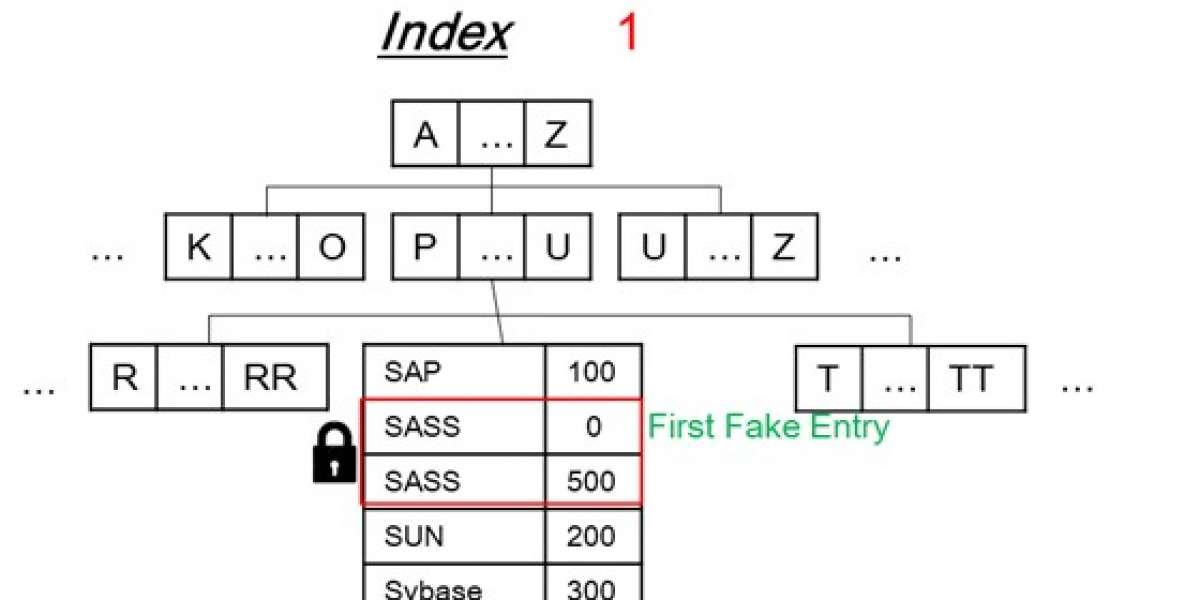

Figure 10. IBM's AI Explainability 360 model hopes to explain the decision-making process via decision trees

Tableau recently launched a new feature, Explain Data, that helps users understand the ‘why’ behind a data point. It evaluates many possible explanations and presents a focused set of them.

Darwin AI’s products probe into the neurons of a DNN and create highly compact models without affecting the performance by a process known as 'Generative Synthesis'. Its explainability toolkit offers tools for network performance diagnostics, particularly helpful for network debugging, design improvement, and addressing regulatory compliance.

Kyndi is a text analytics company whose NLP platform scores the provenance and origin of each document it processes while helping organizations quickly find difficult-to-locate information within collections of documents. This provides organizations with an auditable trail of reasoning if they need to explain their output to stakeholders or regulators.

DARPA started an XAI project to incorporate explainability into its future AI-based defense systems.

Many other companies, such as Factmata, Logical Glue, Flowcast, and Imandra, are building XAI systems to address the interpretability of AI in different domains.

What's next?

As is true of any new technology, the first step is voicing the concerns. Big companies and new startups alike are adopting XAI, not only to comply with government regulations but also to attract customers with innovative explainable solutions. Academia and research are shifting their focus toward explaining existing AI technologies. As the Gartner Emerging Technologies Hype Cycle in figure 11 shows, XAI is progressing along the hype curve and is expected to reach the plateau of productivity within the next decade.

Figure 11. Gartner's Emerging Technologies Hype Cycle

References

[1] Christian Szegedy, Wojciech Zaremba, Ilya Sutskever, Joan Bruna, Dumitru Erhan, Ian Goodfellow, Rob Fergus. Intriguing properties of neural networks. arXiv:1312.6199

[2] Prajwal Paudyal, Junghyo Lee, Azamat Kamzin, Mohamad Soudki, Ayan Banerjee, Sandeep K.S. Gupta. Learn2Sign: Explainable AI for Sign Language Learning. Impact Lab, Arizona State University, 2019

[3] Jason Yosinski, Jeff Clune, Anh Nguyen, Thomas Fuchs, Hod Lipson. Understanding Neural Networks Through Deep Visualization. ICML Deep Learning Workshop 2015

[4] Matthew D Zeiler, Rob Fergus. Visualizing and Understanding Convolutional Networks. arXiv:1311.2901