Introduction

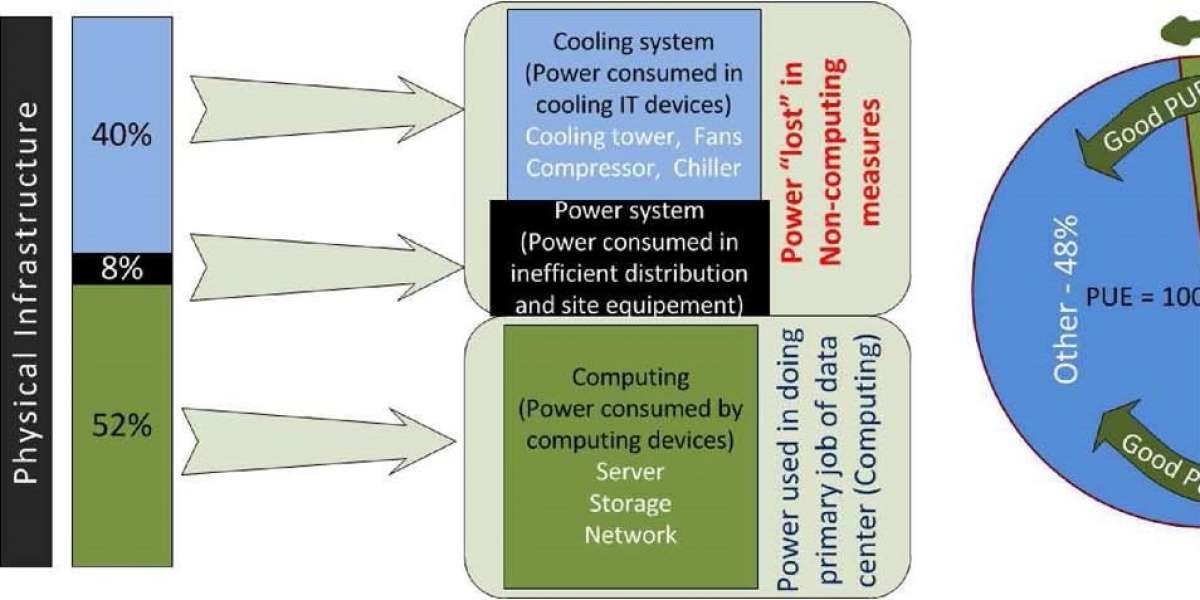

With the advent of cloud-based services, the need for data storage and data availability is higher than ever. Data centres were set up to meet this ever-increasing demand. Cisco defines a data centre as follows: “A data centre can be defined as a physical unit which is used to store important information and data. Its design is purely based on its defined capacity to handle the network traffic and the amount of data that can be stored and transported to the clients who request” [8]. Data centres are built to a set of specifications [1]. The most important of these are low network latency, ease of implementation, good scalability, high availability of resources with almost zero downtime, and a cooling infrastructure that prevents overheating [1]. To meet these specifications, the components used must be of the highest quality and must be redundant.

According to an article in Forbes, data centres across the United States consume nearly 90 billion kilowatt-hours of electricity a year [4]. Data centres therefore consume a great deal of energy and contribute to the emission of greenhouse gases. Figure 1 shows how power is distributed in a typical data centre [2]. Almost 40% of the power is consumed by cooling and another 8% is lost in power distribution and equipment maintenance. From this, we can infer that only about half of the power supplied to a data centre is used for computation; the rest is overhead. Power Usage Effectiveness (PUE) quantifies this overhead: it is the ratio of the total power supplied to the facility to the power consumed by the IT equipment, so a value close to 1 is ideal. The breakdown in Figure 1 corresponds to a PUE of roughly 1.9, which is high, and better design choices are needed to bring it down.

Figure 1: Power Utilization in a Data Center
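The arithmetic behind the PUE estimate can be sketched in a few lines of Python. The 40% and 8% shares come from Figure 1; treating everything that remains as IT load is a simplifying assumption, and the function name `pue_from_it_share` is ours, not part of any standard tool.

```python
def pue_from_it_share(it_share: float) -> float:
    """Return PUE = total facility power / IT equipment power.

    it_share is the fraction of total facility power that reaches
    the IT equipment (0 < it_share <= 1).
    """
    if not 0.0 < it_share <= 1.0:
        raise ValueError("it_share must be in the interval (0, 1]")
    return 1.0 / it_share


# Shares taken from Figure 1.
cooling_share = 0.40
distribution_loss_share = 0.08

# Assumption: all remaining power is consumed by IT equipment.
it_share = 1.0 - cooling_share - distribution_loss_share
pue_value = pue_from_it_share(it_share)

print(f"IT share: {it_share:.0%}")   # IT share: 52%
print(f"PUE: {pue_value:.2f}")       # PUE: 1.92
```

A PUE of 1.0 would mean that every watt supplied reaches the IT equipment, so lowering the cooling share moves the value towards 1.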

Based on these facts, we can conclude that there is an urgent need to design more energy-efficient data centres, so that costs come down and greenhouse gas emissions stay within permissible limits.

The CLEER Model

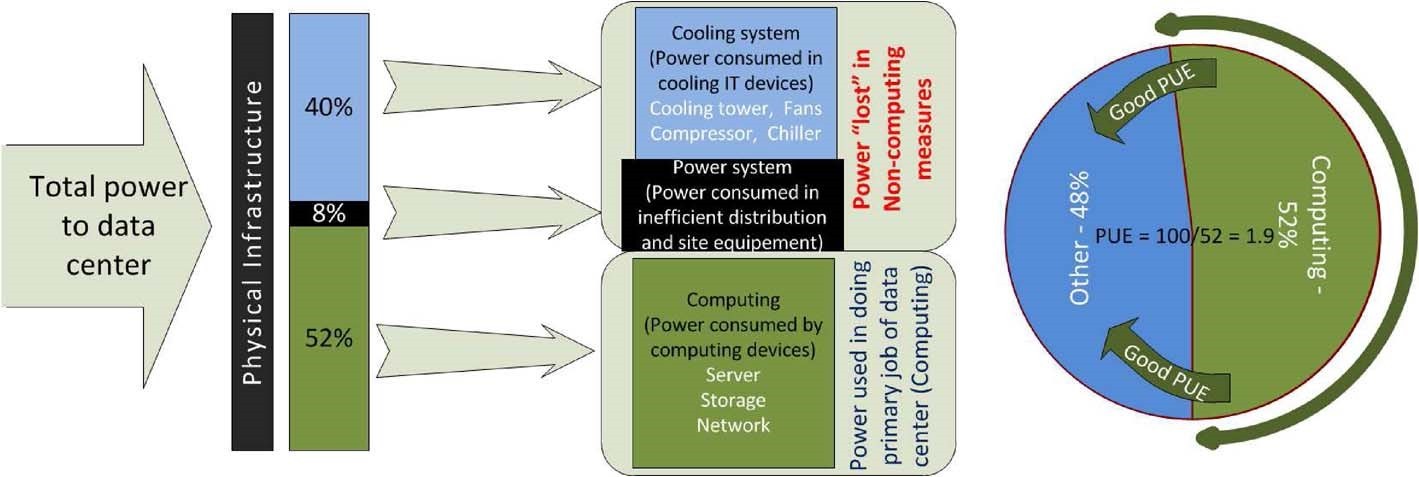

In this section, we analyze the results of the CLEER model [3] to see how existing data centres harm the environment. We then discuss renewable energy sources that can power the daily operations of data centres. The CLEER (Cloud Energy and Emissions Research) Model was developed by Lawrence Berkeley National Laboratory in collaboration with Northwestern University [5]. It is an open-source model that academics and the general public can use to study the rate at which a data centre consumes energy. According to its documentation [5], the model uses a bottom-up framework to analyze scenarios defined by the user. Using the model involves three steps. The first step is to identify a scenario, that is, the part of the system we want to analyze, such as Data Center Operations, Network or Client IT Devices, together with the operation that unit performs. The second step, termed Present-day and Cloud, is to select the type of data centre, the region in which it is located, its power supply and the number of servers. The final step is Results, where energy is reported in terajoules.
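To make the idea of a bottom-up estimate concrete, the following Python sketch adds up the annual energy of groups of devices and converts the total to terajoules. It is an illustration only and does not reproduce the CLEER model's internal calculations; the function name `annual_energy_tj` and the wattage figures are hypothetical placeholders.

```python
HOURS_PER_YEAR = 8760
KWH_TO_TJ = 3.6e-6  # 1 kWh = 3.6 MJ = 3.6e-6 TJ


def annual_energy_tj(components):
    """Sum the annual energy of device groups, bottom-up.

    components is an iterable of (count, average_power_watts) pairs.
    Returns the total annual energy in terajoules.
    """
    total_kwh = sum(
        count * watts / 1000.0 * HOURS_PER_YEAR
        for count, watts in components
    )
    return total_kwh * KWH_TO_TJ


# Hypothetical scenario: the counts and wattages are placeholders,
# not values taken from the CLEER model.
scenario = [
    (100, 300.0),  # 100 volume servers at an assumed 300 W average
    (10, 150.0),   # 10 network switches at an assumed 150 W average
]
print(f"{annual_energy_tj(scenario):.3f} TJ per year")
```

A real analysis would also account for facility overhead and the carbon intensity of the selected region, both of which the model derives from its own data.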

We select a custom scenario for the purpose of this paper. As Figure 2 shows, we selected the New York region and specified the number of servers. The other parameters, Carbon Intensity and Primary Energy, are left at their defaults, which are determined by the selected region.

Figure 2: Input Parameters for a Data Center

Similarly, we keep the default values for the cloud case, since the main aim is to compare the two. The input interface for a cloud data centre is the same; we change only the Number of Volume Servers to 5 and the Number of High-End Servers to 0. The results are shown graphically in Figure 3.

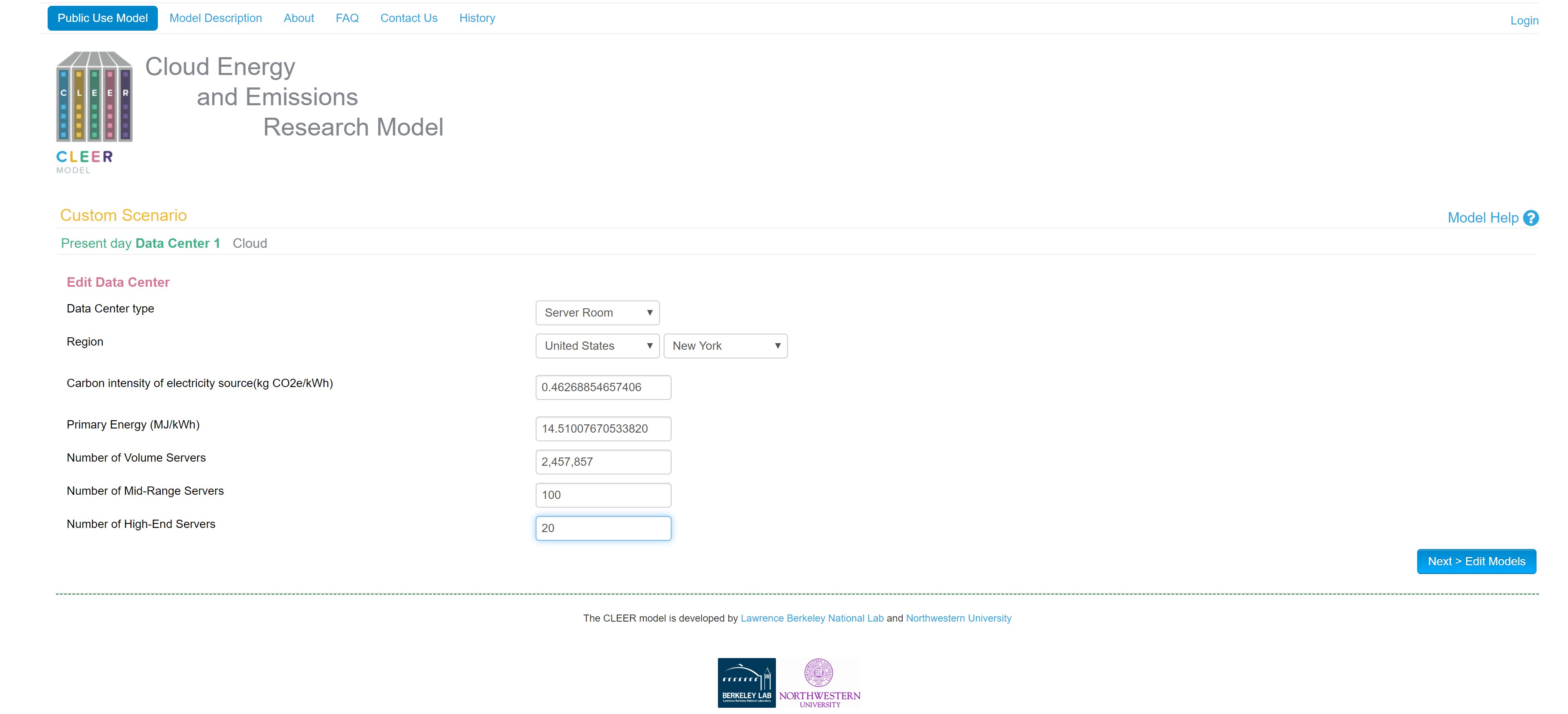

Figure 3: Graphical Comparison of energy consumption between data-centres and cloud

From Figure 3, it is clear that Data Center Operations consume a lot of energy, whereas the Cloud consumes only a fraction of that. We can also see that energy consumption in the Cloud is high for operations such as Network, Client IT Devices and Embodied Client IT. From this model, it is clear that we must find better ways to produce energy than conventional means. Following such analyses, large companies decided to use renewable sources such as wind and solar energy to power their data centres [1]. Since they adopted renewable sources, the data centres' reliance on conventional energy has come down to a great extent.

Design Implementations

In this section, we discuss server architectures, power distribution for servers and the cooling of server systems [2]. Apart from these technical aspects, we also discuss the need for energy-efficient equipment and the concept of virtualization in data centres [1].

a. Server Architectures

The most common processor architectures are the Reduced Instruction Set Computer (RISC) and the Complex Instruction Set Computer (CISC). CISC is more hardware-oriented, with complex instructions that take multiple clock cycles to execute. RISC, on the other hand, is more software-oriented, with simple instructions that typically execute in a single clock cycle. For this reason, most smartphones and embedded systems use the RISC architecture [2]. Another architecture that is gaining a lot of traction is the System-on-Chip (SoC). Li, Lim et al. studied the power consumption of various architecture models [6]. Their results suggest that the SoC architecture consumes the least power and is the most efficient architecture for designing a data centre. According to that study, the RISC architecture had a 300-400% energy-efficiency advantage over the CISC architecture, and the SoC architecture fared better still, with a further 35% advantage over RISC. Designing data centres around the SoC architecture is therefore more environmentally friendly and more efficient than designing them around the other architectures. This section recommends the use of the SoC architecture for servers.

b. Power Distributions

Distributing power in a data centre can be a very complex task. It would be simple if all the infrastructure ran on the same supply, but that is not the case: each component has its own rating, and any supply above that rating will damage the equipment. A typical medium-sized data centre needs a 33 kV supply [2]. No computing equipment can be connected directly to such a high voltage, so we use a step-down transformer to bring the voltage down and then connect it to an Uninterruptible Power Supply (UPS), so that the systems draw their power from the UPS during an outage.

Several techniques can reduce power consumption while maintaining the required level of supply. One is the ‘Smart Grid’ [2], which can be defined as a system that uses digital techniques to detect changes in voltage and adjust the supply accordingly. In a smart grid, the equipment is arranged in racks and each rack has an independent power source, so that a loss of power to one rack does not affect the others. Pelley et al. [7] discuss a method called ‘Shuffled Power Topologies’, which can be generalized as ‘Power Routing’. In a power routing system, as they describe it, a central processor controls all the power supplies to the servers. It controls the rate at which power is supplied to each server, it can handle a reduction in supply from a particular source by connecting the server to another supply, and it can suppress voltage and current spikes that could damage the equipment. Power routing therefore lets us control the amount of power being supplied, which reduces costs and brings us closer to the goal of a greener data centre. This section recommends the use of a power routing methodology.
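The behaviour of the central controller can be illustrated with a small Python sketch. It uses a simple greedy rule (largest demand first, feed with the most headroom) that we chose for illustration; it is not the scheduling method of Pelley et al. [7], and the names `Feed` and `route_power`, as well as all wattages, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Feed:
    """A power feed with a fixed capacity in watts."""
    name: str
    capacity_w: float
    load_w: float = 0.0
    online: bool = True

    def headroom(self) -> float:
        # An offline feed cannot supply anything.
        return self.capacity_w - self.load_w if self.online else 0.0


def route_power(servers, feeds):
    """Assign each server to one of the feeds it is wired to.

    servers maps a server name to (demand_w, [feed names]).
    Returns a dict mapping server name to the chosen feed name,
    or None when no wired feed has enough headroom.
    """
    by_name = {feed.name: feed for feed in feeds}
    for feed in feeds:
        feed.load_w = 0.0  # recompute the plan from scratch

    assignment = {}
    # Greedy heuristic: place the largest demands first.
    ordered = sorted(servers.items(), key=lambda item: -item[1][0])
    for name, (demand_w, wired) in ordered:
        candidates = [by_name[w] for w in wired
                      if by_name[w].headroom() >= demand_w]
        if candidates:
            best = max(candidates, key=Feed.headroom)
            best.load_w += demand_w
            assignment[name] = best.name
        else:
            assignment[name] = None  # server must be capped or shed
    return assignment


feeds = [Feed("A", 500.0), Feed("B", 500.0)]
servers = {
    "s1": (300.0, ["A", "B"]),
    "s2": (300.0, ["A", "B"]),
    "s3": (150.0, ["B"]),
}
print(route_power(servers, feeds))

feeds[0].online = False  # simulate an outage on feed A
print(route_power(servers, feeds))
```

With both feeds online every server is supplied; after feed A fails, the controller moves s1 to feed B and reports that s2 can no longer be served, which is the point at which a real system would cap or shed load.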

c. Cooling

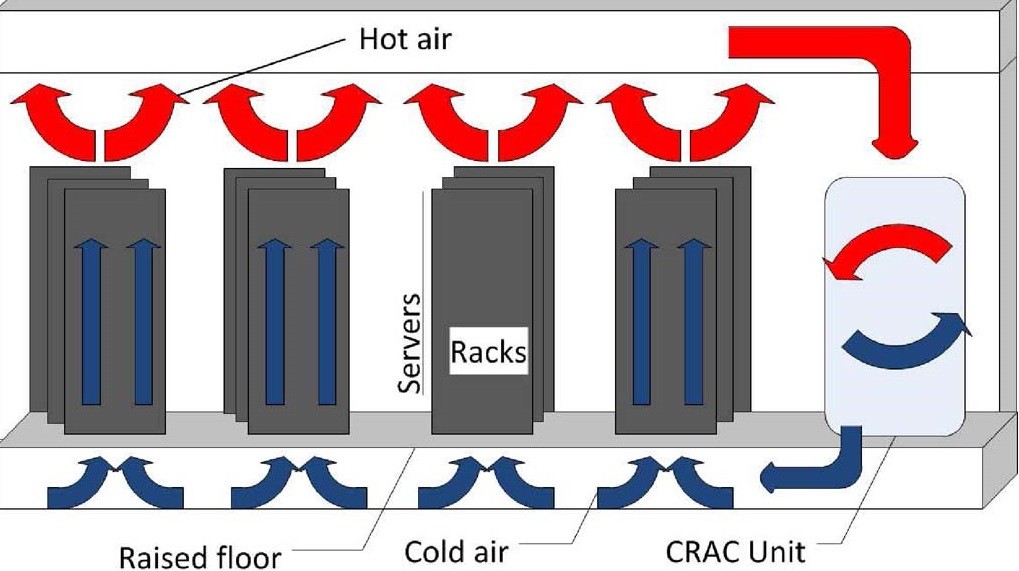

This section deals with cooling the server systems in a data centre. As shown in Figure 1 in the Introduction, cooling systems, which comprise cooling towers, fans, compressors, chillers etc., consume nearly 40% of the total power supplied to a data centre [2]. This share is high, and we need to bring it down to improve the efficiency of the data centre. All equipment that contains a computing system generates heat, and removing this heat contributes to the efficiency and longevity of the system [2]. Data centres generally employ two methods to dissipate the heat generated by the servers. One relies on airflow through the data centre; the other uses a liquid coolant, which lowers the temperature of the systems by surrounding them with cooling pipes. Liquid cooling is considered more effective at removing heat than airflow. At the same time, it makes the data centre more complex to design, because we must also consider the layout of the cooling pipes, leak-proofing, maintaining a constant flow throughout etc. [2]. In their analysis, J. Shuja et al. propose the use of Computer Room Air Conditioning (CRAC) units. As shown in Figure 4, CRAC units collect the hot air generated by the server racks through pipe inlets. Inside the CRAC unit, the collected hot air is cooled. The cooler air is then supplied to the servers through a pipeline located beneath them. The servers are raised slightly above ground level so that this mechanism can be implemented and the temperature inside the data centre can be controlled. This mechanism lets us maintain a uniform temperature inside the data centre, reduces the cost of cooling the systems and improves device reliability [2].

Figure 4: A representation of the CRAC unit [2].

d. Power-aware Routing Algorithm and Virtualization

This section deals with routing algorithms and virtual machines, both of which can reduce power consumption and help us achieve our goal of a green data centre. Routing algorithms are the main building blocks for network traffic and packet distribution in a data centre. We need algorithms that are power-sensitive. Earlier algorithms such as distance-vector routing, link-state routing and path-vector routing are not power-sensitive, and a considerable amount of energy is lost when they are used. E. Baccour et al., in their analysis, discuss a power-sensitive algorithm termed ‘Elastic Tree’ [1]. It is a dynamic algorithm that adjusts itself based on the energy a data centre consumes, and it has three main components. The first is the Optimizer Module, which, as the name suggests, tries to find the lowest possible power supply that can be used for a particular operation. The second is the Routing Module, which tries to find the shortest path for sending data from the source to its destination. The third is the Power Module, which is responsible for shutting the system off when it is not in use, thereby conserving energy. We can employ the Elastic Tree algorithm when designing a data centre.
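The interplay of the three modules can be sketched in Python on a toy topology. This is a simplified illustration under our own assumptions, not the Elastic Tree implementation: routing uses a plain breadth-first search by hop count, the "optimizer" simply keeps whatever those paths touch, and the names `shortest_path` and `plan_power` and the topology itself are hypothetical.

```python
from collections import deque


def shortest_path(graph, src, dst):
    """Routing step: breadth-first search for a minimum-hop path."""
    previous = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbour in graph[node]:
            if neighbour not in previous:
                previous[neighbour] = node
                queue.append(neighbour)
    return None  # destination unreachable


def plan_power(graph, switches, flows):
    """Return (active, idle) switch sets for the given traffic flows.

    Optimizer step: keep only the switches that some flow needs.
    Power step: every other switch is a candidate for shutdown.
    """
    used = set()
    for src, dst in flows:
        path = shortest_path(graph, src, dst)
        if path is not None:
            used.update(path)
    active = switches & used
    idle = switches - used
    return active, idle


# Toy two-tier topology: hosts h1..h4, edge switches e1 and e2,
# and two redundant core switches c1 and c2.
graph = {
    "h1": ["e1"], "h2": ["e1"], "h3": ["e2"], "h4": ["e2"],
    "e1": ["h1", "h2", "c1", "c2"],
    "e2": ["h3", "h4", "c1", "c2"],
    "c1": ["e1", "e2"],
    "c2": ["e1", "e2"],
}
switches = {"e1", "e2", "c1", "c2"}

active, idle = plan_power(graph, switches, [("h1", "h3")])
print(sorted(active))  # ['c1', 'e1', 'e2']
print(sorted(idle))    # ['c2']
```

Here a single flow needs only one core switch, so the redundant core switch c2 can be powered down until traffic grows. A production system would also have to respect link capacities and keep enough redundancy for fault tolerance.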

Virtualization is another concept that can help us conserve not only energy but also space. We can set up several Virtual Machines (VMs) on a single physical server [1]. This saves space, as it is more convenient to set up multiple VMs on the same server than to dedicate individual resources to each machine. If each machine had a dedicated connection to the power source, it would draw a lot of power, which is not ideal. The main advantage of VMs is that the same resources are shared among multiple users, and when a VM is not in use we can shut it off and allocate its resources to other VMs. Migration is a key aspect of VMs: it is the process of transferring a VM, including all of its contents and storage, from one physical server to another without disconnecting the system. This dynamic allocation of resources helps us conserve a lot of power.
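The saving from consolidation can be illustrated with a short Python sketch that packs VMs onto as few servers as possible using the first-fit decreasing heuristic. The heuristic is our choice for illustration and is not prescribed by [1]; the function name `consolidate`, the VM names and the load figures (in percent of one server's capacity) are hypothetical.

```python
def consolidate(vm_loads, server_capacity):
    """Pack VMs onto servers with first-fit decreasing.

    vm_loads maps a VM name to its load; server_capacity is the load
    one server can carry. Returns a list of servers, each given as
    the list of VM names placed on it.
    """
    servers = []  # each entry is [remaining_capacity, [vm names]]
    ordered = sorted(vm_loads.items(), key=lambda item: item[1], reverse=True)
    for vm, load in ordered:
        if load > server_capacity:
            raise ValueError(f"{vm} does not fit on any server")
        for server in servers:
            if server[0] >= load:
                server[0] -= load
                server[1].append(vm)
                break
        else:
            # No running server has room, so start a new one.
            servers.append([server_capacity - load, [vm]])
    return [names for _, names in servers]


# Hypothetical loads, in percent of one server's capacity.
vm_loads = {"a": 50, "b": 40, "c": 30, "d": 30, "e": 20, "f": 20}
placement = consolidate(vm_loads, server_capacity=100)
print(placement)       # [['a', 'b'], ['c', 'd', 'e', 'f']]
print(len(placement))  # 2
```

Six VMs that would otherwise occupy six dedicated machines fit on two servers in this example, and the remaining machines can be switched off. Live migration is what lets such a placement be recomputed as loads change.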

Conclusion

In recent years, the growth of cloud computing has been staggering. There is a striking difference between the way IT services were delivered before and after the advent of cloud computing. Delivering services to a large number of clients has never been this easy. However, the data centres that process many of these services consume large amounts of electricity and are expensive to maintain. There is therefore a growing demand for design implementations for a green data centre. The cloud computing business model aims at 99.99% availability of its services, and energy-saving techniques may not be able to provide the same availability. In this paper, we analyzed the energy consumption and carbon emissions of a data centre against those of a cloud data centre. We also discussed the server architecture that can be more energy-efficient than the existing ones, the best possible power distribution in a data centre, the cooling methodologies that can be employed, and the routing algorithms that can be implemented, along with the concept of virtualization and its benefits.

References

[1] E. Baccour, S. Foufou, R. Hamila, and A. Erbad, “Green data center networks: a holistic survey and design guidelines,” 2019 15th International Wireless Communications Mobile Computing Conference (IWCMC), 2019, pp. 1108-1114

[2] J. Shuja, K. Bilal, S. A. Madani, M. Othman, R. Ranjan, P. Balaji, and S. U. Khan, “Survey of Techniques and Architectures for Designing Energy-Efficient Data Centers,” IEEE Systems Journal, vol. 10, no. 2, pp. 507–519, 2016

[3] Xueying Yu, Yingqiao Ma, Jingjing Li, "Analysis and Research on Green Cloud Computing," 2018 2nd IEEE Advanced Information Management, Communicates, Electronic and Automation Control Conference (IMCEC 2018), 2018, pp. 521-524

[4] Radoslav Danilak, “Why Energy Is A Big And Rapidly Growing Problem For Data Centers,” Forbes, December 15th, 2017. Available from https://www.forbes.com/sites/forbestechcouncil/2017/12/15/why-energy-is-a-big-and-rapidly-growing-problem-for-data-centers/#1e55625c5a30. Accessed on 29th September 2019.

[5] Lawrence Berkeley National Laboratory and Northwestern University, 2013. Cloud Energy and Emissions Research Model [Online]. Available from http://cleermodel.lbl.gov. Accessed on 1st October 2019.

[6] S. Li, K. Lim, P. Faraboschi, J. Chang, P. Ranganathan, and N. Jouppi, “System-level integrated server architectures for scale-out datacenters,” in Proc. 44th Annual IEEE/ACM Int. Symp. Microarchitecture, Dec. 2011, pp. 260-271.

[7] S. Pelley, D. Meisner, P. Zandevakili, T. Wenisch, and J. Underwood, “Power routing: Dynamic power provisioning in the data center,” ACM SIGPLAN Notices, vol. 45, no. 3, pp. 231-242, Mar. 2010.

[8] Cisco. Available from https://www.cisco.com/c/en/us/solutions/data-center-virtualization/what-is-a-data-center.html.