Abstract

Amazon Web Services offers a broad set of global cloud-based products including compute, storage, databases, analytics, networking, mobile, developer tools, management tools, IoT, security, and enterprise applications: on-demand, available in seconds, with pay-as-you-go pricing. From data warehousing to deployment tools, directories to content delivery, over 175 AWS services are available. New services can be provisioned quickly, without the upfront capital expense. This allows enterprises, start-ups, small and medium-sized businesses, and customers in the public sector to access the building blocks they need to respond quickly to changing business requirements. In 2006, Amazon Web Services (AWS) began offering IT infrastructure services to businesses as web services—now commonly known as cloud computing. One of the key benefits of cloud computing is the opportunity to replace upfront capital infrastructure expenses with low variable costs that scale with your business. With the cloud, businesses no longer need to plan for and procure servers and other IT infrastructure weeks or months in advance. Instead, they can instantly spin up hundreds or thousands of servers in minutes and deliver results faster. Today, AWS provides a highly reliable, scalable, low-cost infrastructure platform in the cloud that powers hundreds of thousands of businesses in 190 countries around the world. (AWS whitepaper: Overview of AWS-Aug 2020)

Infrastructure as Code has emerged as a best practice for automating the provisioning of infrastructure services. This article describes the benefits of Infrastructure as Code, and how to leverage the capabilities of Amazon Web Services in this realm to support DevOps initiatives. DevOps is the combination of cultural philosophies, practices, and tools that increases your organization’s ability to deliver applications and services at high velocity. This enables your organization to be more responsive to the needs of your customers. The practice of Infrastructure as Code can be a catalyst that makes attaining such a velocity possible. This article details these principles of AWS and their analysis, the importance of Snowball, its flaws, how it should be managed, and the financial analysis of that operating plan. (AWS whitepaper: Infrastructure as Code-July 2017)

AWS CloudFormation gives you an easy way to model a collection of related AWS and third-party resources, provision them quickly and consistently, and manage them throughout their lifecycles, by treating infrastructure as code. A CloudFormation template describes your desired resources and their dependencies so you can launch and configure them together as a stack. You can use a template to create, update, and delete an entire stack as a single unit, as often as you need to, instead of managing resources individually. You can manage and provision stacks across multiple AWS accounts and AWS Regions.

Analysis of the company: Company History, Status Quo, Mission, Vision, Values

- History: Publicly launched on March 19, 2006, AWS offered Simple Storage Service (S3) and Elastic Compute Cloud (EC2), with Simple Queue Service (SQS) following soon after. By 2009, S3 and EC2 had launched in Europe, the Elastic Block Store (EBS) had been made public, and a powerful content delivery network (CDN), Amazon CloudFront, had become a formal part of the AWS offering. These developer-friendly services attracted cloud-ready customers and set the table for formalized partnerships with data-hungry enterprises such as Dropbox, Netflix, and Reddit, all before 2010.

- Status Quo: As of 2020, AWS comprises more than 175 products and services, including computing, storage, networking, database, analytics, application services, deployment, management, mobile, developer tools, and tools for the Internet of Things. The most popular include Amazon Elastic Compute Cloud (EC2), Amazon Simple Storage Service (Amazon S3), Amazon Connect, and AWS Lambda (a serverless function service that enables, for example, serverless ETL between EC2 instances and S3). Most services are not exposed directly to end users, but instead offer functionality through APIs for developers to use in their applications. Amazon Web Services' offerings are accessed over HTTP, using the REST architectural style and the SOAP protocol for older APIs, and exclusively JSON for newer ones.

- Amazon Mission: "Amazon is guided by four principles: customer obsession rather than competitor focus, passion for invention, commitment to operational excellence, and long-term thinking. Customer reviews, 1-Click shopping, personalized recommendations, Prime, Fulfillment by Amazon, AWS, Kindle Direct Publishing, Kindle, Fire tablets, Fire TV, Amazon Echo, and Alexa are some of the products and services pioneered by Amazon."

- Amazon Vision: Our vision is to be earth's most customer-centric company; to build a place where people can come to find and discover anything they might want to buy online.

- Amazon Values:

- Customer Obsession

- Ownership

- Invent and Simplify

- Learn and Be Curious

- Hire the Best

- The Highest Standards

- Think Big

- Bias for Action

- Earn Trust

- Deliver Results

Benefits of AWS Cloud Computing

Low Cost- AWS offers low, pay-as-you-go pricing with no up-front expenses or long-term commitments. We are able to build and manage a global infrastructure at scale, and pass the cost saving benefits onto you in the form of lower prices. With the efficiencies of our scale and expertise, we have been able to lower our prices on 15 different occasions over the past four years.

Agility and Instant Elasticity- AWS provides a massive global cloud infrastructure that allows you to quickly innovate, experiment and iterate. Instead of waiting weeks or months for hardware, you can instantly deploy new applications, instantly scale up as your workload grows, and instantly scale down based on demand. Whether you need one virtual server or thousands, whether you need them for a few hours or 24/7, you still only pay for what you use.

Open and Flexible- AWS is a language and operating system agnostic platform. You choose the development platform or programming model that makes the most sense for your business. You can choose which services you use, one or several, and choose how you use them. This flexibility allows you to focus on innovation, not infrastructure.

Secure- AWS is a secure, durable technology platform with industry-recognized certifications and audits: PCI DSS Level 1, ISO 27001, FISMA Moderate, FedRAMP, HIPAA, and SOC 1 (formerly referred to as SAS 70 and/or SSAE 16) and SOC 2 audit reports. Our services and data centers have multiple layers of operational and physical security to ensure the integrity and safety of your data.

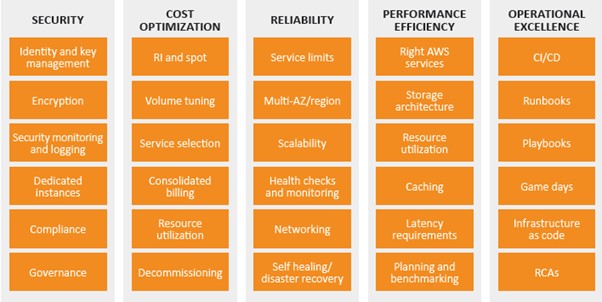

Well Architected Framework- 5 pillars

Back in 2015, AWS launched the AWS Well-Architected Framework to make sure that you have all of the information you need to architect your cloud workloads well. The framework is built on five pillars:

- Operational Excellence– The ability to run and monitor systems to deliver business value and to continually improve supporting processes and procedures.

- Security – The ability to protect information, systems, and assets while delivering business value through risk assessments and mitigation strategies.

- Reliability– The ability of a system to recover from infrastructure or service disruptions, dynamically acquire computing resources to meet demand, and mitigate disruptions such as misconfigurations or transient network issues.

- Performance Efficiency– The ability to use computing resources efficiently to meet system requirements, and to maintain that efficiency as demand changes and technologies evolve.

- Cost Optimization– The ability to run systems to deliver business value at the lowest price point.

SWOT Analysis (AWS)

Strengths- AWS was the first to offer public IaaS, as far back as 2006. Because it has been doing this the longest, it is not surprising that one of its key strengths is the maturity of its IaaS offerings and of the AWS ecosystem as a whole. AWS offers an almost overwhelming number of services, from DNS routing to caching to load balancers: virtually anything you could need from the cloud, AWS can deliver. Additionally, AWS has the most data centers of any IaaS provider, which means it has the most comprehensive global coverage and the most robust, reliable network. These two key strengths are often the main draw to AWS for many customers: it is almost guaranteed that you can do what you need to do on AWS, no matter how obscure, and it will offer suitable reliability for even the most sensitive applications.

Weaknesses- The maturity of the AWS ecosystem has, ironically, proven to be a drawback for some, as the huge number of options presented to users after starting their first instance can be overwhelming. The AWS environment is so vast, in fact, that many businesses find employees require focused training on the AWS ecosystem before they are able to properly manage AWS services. Additionally, some people have called AWS’s focus on the public cloud a weakness. AWS doesn’t have a specific “hybrid cloud” solution (other than its GovCloud solutions, which are mainly focused on the needs of public sector clients). Since hybrid cloud architectures are the dominant model for most large enterprises, many larger companies have been forced to look to other vendors, such as Microsoft, that better address that need. In some cases, the need for a true hybrid cloud setup can be sidestepped by using a solution like AWS Storage Gateway, which enables companies to seamlessly extend their on-premises data storage into the cloud. (Palmira, Sophia- June 25, 2018)

Opportunities- The attractive pricing of AWS will draw customers to its services. A further strength that makes the proposition attractive is that AWS tools give customers visibility of current and future spending. The AWS Trusted Advisor capability is an example of a tool that enables customers to optimize their spending on AWS. Capabilities such as this give AWS the opportunity to increase take-up. The AWS Marketplace is a large and rapidly growing business area, with more than 7,000 software listings from 1,500 ISVs. The other key capability of the AWS Marketplace is the growth of market vertical solutions and new technologies such as AI/ML. AWS customers can also subscribe to a diverse selection of third-party data in AWS Marketplace through the newly launched AWS Data Exchange service.

Threats- AWS must continue to expand its offerings and grow its marketplace in order to stay ahead of the competition. AWS has a lead over its competitors in terms of its cloud offerings. However, Microsoft Azure is adopting a number of strategic partnerships that have expanded its offerings to make them more appealing to the enterprise market. It has also won a number of significant customers in key market verticals, particularly in the retail sector, where AWS’s parent company Amazon is seen as a competitor. AWS must continue to grow its marketplace and the capabilities it offers to stay ahead of Microsoft and the rest of the competition. (Roy Illsley, Omdia- May 7, 2020)

Manual Infrastructure Provisioning

In the past, setting up IT infrastructure has been a very manual process. Humans have to physically rack and stack servers. Then this hardware has to be manually configured to the requirements and settings of the operating system used and application that’s being hosted. Finally, the application has to be deployed to the hardware. Only then can your application be launched.

There are many drawbacks to this manual process:

- It can take a long time to acquire the necessary hardware. You’re at the mercy of the hardware manufacturer’s production and delivery schedules. And if you need products customized for specific requirements, it could take months to receive your order.

- People have to be hired to perform the tedious setup work. You’ll need network engineers to set up physical network infrastructure, storage engineers to maintain physical drives, and many others to maintain all of this hardware. That leads to more overhead, management, and costs.

- Real estate has to be acquired to build data centers to house all of this hardware. On top of that, you’ll have to maintain these data centers, which means paying maintenance and security employees, HVAC and electricity expenses, and many other costs.

- Achieving high availability of your applications is a big problem as well. You would have to build a backup data center, which could double your real estate and other costs mentioned above.

- It would also take a long time to scale an application to accommodate high traffic. Because racking, stacking, and configuring servers is such a slow process, many applications would buckle under spikes in usage while this hardware was being set up. This would have a huge impact on your company’s ability to serve your customers and launch new products and services quickly.

- To account for these traffic spikes, you may have to provision more servers than you actually need on a daily basis. Thus, you’ll have servers that sit idle for large amounts of time, which will increase your costs for this unused capacity.

- Finally, because different people are manually deploying these servers, setups are bound to be inconsistent. This can lead to unwanted variance in configurations, which can be detrimental to how your applications run.

The advent of cloud computing addresses some of these problems. You no longer have to rack and stack servers, which alleviates all of the issues and costs that come with human capital and real estate. Also, you could spin up servers, databases, and other necessary infrastructure very quickly, which would address the scalability, high availability, and agility problems.

But the configuration consistency issue, where manual setup of cloud infrastructure can lead to discrepancies, still remains. That’s where Infrastructure as Code comes into play. (ThornTech, 2018)

“Infrastructure as code is an approach to managing IT infrastructure for the age of cloud, microservices and continuous delivery.” – Kief Morris, head of continuous delivery for ThoughtWorks Europe

IaC Definition and Benefits

Infrastructure as Code solves an age-old problem: setting up and configuring IT resources was an arduous, manual, error-prone process. Today it is possible to define a configuration file and spin up IT resources automatically, consistently, and predictably from that file. On AWS, the CloudFormation service provides Infrastructure as Code capabilities. CloudFormation uses templates, which are human-readable, easily edited configuration files written in JSON or YAML, to define the resources you want to set up. CloudFormation reads a template and generates a stack, a set of resources ready to use on AWS.

So, by using CloudFormation, you can define anything from simple resources to complex multi-resource applications using templates and automatically deploy the resources on AWS. You can test your Infrastructure as Code by fine-tuning your configuration and repeating the process.

Benefits of IaC on AWS

The AWS approach to Infrastructure as Code has several advantages:

- High visibility: CloudFormation templates are just code, so they can be viewed and edited with any text editor. They clearly state which resources will be created and define their parameters, making it easy for everyone on your team to see and understand what is being deployed.

- Automated deployment and orchestration: CloudFormation takes a declarative approach. You declare the end result of your deployment, and CloudFormation performs the right set of operations to get you there. Even if you specify a complex multi-part application, there is no need for scripting or manual actions; CloudFormation can create a working stack fully automatically.

- Stability with version control: Changes to templates can create unintended consequences, errors, or service interruptions. You can save your CloudFormation templates in a version control system, maintain a tested production version of your template, and, if anything goes wrong, tear down the resources and revert to the tested, working template. CloudFormation also checks that a deployment was successful and, if it detects errors, rolls back gracefully to the last known good configuration.

- Reusability and scalability: AWS lets you deploy the same template as many times as you need. You can define and test a stack one time and then reuse it for many systems across your enterprise, or scale up the same system by deploying it several times. This is also useful for AWS migration efforts: when migrating services to the cloud, it is often useful to start them up using CloudFormation templates. (Yifat Perry, Dec 2019)

Infrastructure as Code can simplify and accelerate your infrastructure provisioning process, help you avoid mistakes and comply with policies, keep your environments consistent, and save your company a lot of time and money. Your engineers can be more productive and focus on higher-value tasks. And you can better serve your customers. If IaC isn’t something you’re doing now, maybe it’s time to start!

Best Practices for IaC

- Codify everything- All infrastructure specifications should be explicitly coded in configuration files, such as AWS CloudFormation templates, Chef recipes, Ansible playbooks, or any other IaC tool you’re using. These configuration files represent the single source of truth of your infrastructure specifications and describe exactly what cloud components you’ll use, how they relate to one another, and how the entire environment is configured. Infrastructure can then be deployed quickly and seamlessly, and ideally no one should log into a server to manually make adjustments.


- Document as little as possible- Your IaC code will essentially be your documentation, so there shouldn’t be many additional instructions for your IT employees to execute. In the past, if any infrastructure component was updated, documentation needed to be updated in lockstep to avoid inconsistencies. We know that this didn’t always happen. With IaC, the code itself represents the documentation of the infrastructure and will always be up to date. Additional documentation, such as diagrams and other setup instructions, may be necessary to educate those employees who are less familiar with the infrastructure deployment process. But most of the deployment steps will be performed by the configuration code, so this documentation should ideally be kept to a minimum.

- Maintain version control- These configuration files will be version-controlled. Because all configuration details are written in code, any changes to the codebase can be managed, tracked, and reconciled. Just like with application code, source control tools like Git, Mercurial, Subversion, or others should be used to maintain versions of your IaC codebase. Not only will this provide an audit trail for code changes, it will also provide the ability to collaborate, peer-review, and test IaC code before it goes live. Code branching and merging best practices should also be used to further increase developer collaboration and ensure that updates to your IaC code are properly managed.

- Continuously test, integrate, and deploy- Continuous testing, integration, and deployment processes are a great way to manage all the changes that may be made to your infrastructure code. Testing should be rigorously applied to your infrastructure configurations to ensure that there are no post-deployment issues. Depending on your needs, an array of test types – unit, regression, integration and many more – should be performed. Automated tests can be set up to run each time a change is made to your configuration code.

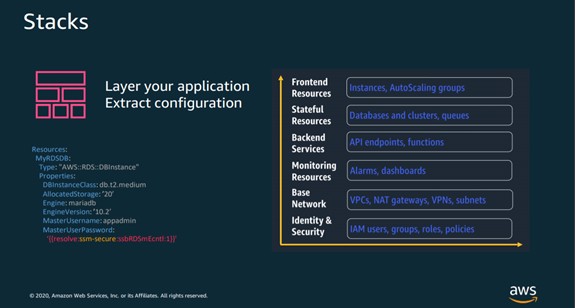

- Make your infrastructure code modular- Microservices architecture, where software is built by developing smaller, modular units of code that can be deployed independently of the rest of a product’s components, is a popular trend in the software development world. The same concept can be applied to IaC. You can break down your infrastructure into separate modules or stacks then combine them in an automated fashion. There are a few benefits to this approach. First, you can have greater control over who has access to which parts of your infrastructure code. For example, you may have junior engineers who aren’t familiar with or don’t have expertise of certain parts of your infrastructure configuration. By modularizing your infrastructure code, you can deny access to these components until the junior engineers get up to speed. Also, modular infrastructure naturally limits the amount of changes that can be made to the configuration. Smaller changes make bugs easier to detect and allow your team to be more agile. And if you use the microservices development approach, a configuration template can be created for each microservice to ensure infrastructure consistency. Then all of the microservices can be connected through HTTP or messaging interfaces. Security of your infrastructure should also be continuously monitored and tested.

- Make your infrastructure immutable (when possible)- The idea behind immutable infrastructure is that IT infrastructure components are replaced for each deployment, instead of changed in place. You can write code for and deploy an infrastructure stack once, use it multiple times, and never change it. If you need to make changes to your configuration, you would just terminate that stack and build a new one from scratch. Making your infrastructure immutable provides consistency, avoids configuration drift, and restricts the impact of undocumented changes to your stack. It also improves security and makes troubleshooting easier due to the lack of configuration edits. While immutable infrastructure is currently a hotly debated topic, we believe that you should try to make your infrastructure immutable whenever possible to increase the consistency of your configurations. (Thorn technologies, IaC handbook)
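The "continuously test" practice above can start with very small structural checks that run on every change to the infrastructure code. The following is a minimal sketch in Python, assuming the template has already been loaded into a dictionary; the function name `check_template` and the sample templates are hypothetical, and the checks cover only a few basic rules rather than everything CloudFormation itself validates.

```python
def check_template(template: dict) -> list:
    """Return a list of human-readable problems found in a template dict."""
    problems = []
    resources = template.get("Resources")
    # Resources is the only mandatory section of a CloudFormation template.
    if not isinstance(resources, dict) or not resources:
        problems.append("template must declare at least one resource")
        return problems
    # Every resource declaration needs a Type such as "AWS::S3::Bucket".
    for logical_id, resource in resources.items():
        if not isinstance(resource, dict) or "Type" not in resource:
            problems.append(f"resource {logical_id} has no Type")
    # The format version is optional, but only one value is currently valid.
    version = template.get("AWSTemplateFormatVersion")
    if version is not None and version != "2010-09-09":
        problems.append(f"unsupported format version {version}")
    return problems


# Hypothetical sample templates used only to exercise the checks.
good_template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Resources": {"ExampleBucket": {"Type": "AWS::S3::Bucket"}},
}
bad_template = {"Resources": {"ExampleBucket": {"Properties": {}}}}

print(check_template(good_template))
print(check_template(bad_template))
```

A check like this could run in a CI pipeline before any deployment step, so that a malformed template fails fast instead of failing during stack creation.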

Configuration Orchestration VS. Configuration Management

Configuration orchestration tools, which include Terraform and AWS CloudFormation, are designed to automate the deployment of servers and other infrastructure. Configuration management tools such as Chef, Puppet, and others help configure the software and systems on infrastructure that has already been provisioned. Configuration orchestration tools do some level of configuration management, and configuration management tools do some level of orchestration. Companies can, and often do, use both types of tools together.

Infrastructure As Code Tools

- Terraform- It is an infrastructure provisioning tool created by HashiCorp. It allows you to describe your infrastructure as code, creates “execution plans” that outline exactly what will happen when you run your code, builds a graph of your resources, and automates changes with minimal human interaction. Terraform uses its own domain-specific language (DSL) called HashiCorp Configuration Language (HCL). HCL is JSON-compatible and is used to create the configuration files that describe the infrastructure resources to be deployed. Terraform is cloud-agnostic and allows you to automate infrastructure stacks from multiple cloud service providers simultaneously and integrate other third-party services. You can even write Terraform plugins to add new advanced functionality to the platform.

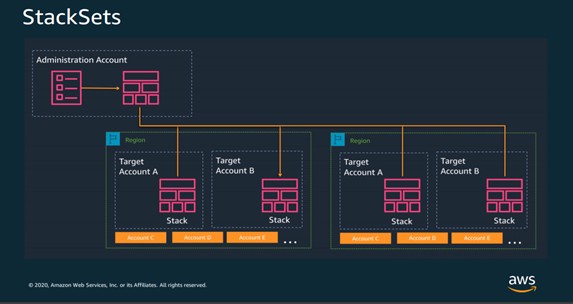

- AWS CloudFormation- Similar to Terraform, AWS CloudFormation is a configuration orchestration tool that allows you to code your infrastructure to automate your deployments. Primary differences lie in that CloudFormation is deeply integrated into and can only be used with AWS, and CloudFormation templates can be created with YAML in addition to JSON. CloudFormation allows you to preview proposed changes to your AWS infrastructure stack and see how they might impact your resources, and manages dependencies between these resources. To ensure that deployment and updating of infrastructure is done in a controlled manner, CloudFormation uses Rollback Triggers to revert infrastructure stacks to a previous deployed state if errors are detected. You can even deploy infrastructure stacks across multiple AWS accounts and regions with a single CloudFormation template.

- Azure Resource Manager and Google Cloud Deployment Manager- If you’re using Microsoft Azure or Google Cloud Platform, these cloud service providers offer their own IaC tools similar to AWS CloudFormation. Azure Resource Manager allows you to define the infrastructure and dependencies for your app in templates, organize dependent resources into groups that can be deployed or deleted in a single action, control access to resources through user permissions, and more. Google Cloud Deployment Manager offers many similar features to automate your GCP infrastructure stack. You can create templates using YAML or Python.

- Chef- Chef is one of the most popular configuration management tools that organizations use in their continuous integration and delivery processes. Chef allows you to create “recipes” and “cookbooks” using its Ruby-based DSL. These recipes and cookbooks specify the exact steps needed to achieve the desired configuration of your applications and utilities on existing servers. This is called a “procedural” approach to configuration management, as you describe the procedure necessary to get your desired state. Chef is cloud-agnostic and works with many cloud service providers such as AWS, Microsoft Azure, Google Cloud Platform, OpenStack, and more.

- Puppet- Similar to Chef, Puppet is another popular configuration management tool that helps engineers continuously deliver software. Using Puppet’s Ruby-based DSL, you can define the desired end state of your infrastructure and exactly what you want it to do. Then Puppet automatically enforces the desired state and fixes any incorrect changes. This “declarative” approach – where you declare what you want your configuration to look like, and then Puppet figures out how to get there – is the primary difference between Puppet and Chef. Also, Puppet is mainly directed toward system administrators, while Chef primarily targets developers. Puppet integrates with the leading cloud providers like AWS, Azure, Google Cloud, and VMware, allowing you to automate across multiple clouds.

- Ansible- It is an infrastructure automation tool maintained by Red Hat, the large enterprise open source technology provider, which acquired Ansible in 2015. Ansible models your infrastructure by describing how your components and systems relate to one another, as opposed to managing systems independently. Ansible doesn’t use agents, and its code is written in YAML in the form of Ansible Playbooks, so configurations are very easy to understand and deploy. You can also extend Ansible’s functionality by writing your own Ansible modules and plugins.

- Docker- It helps you easily create containers that package your code and dependencies together so your applications can run in any environment, from your local workstation to any cloud service provider’s servers. Configuration files called Dockerfiles, written in Docker’s own instruction syntax rather than YAML, are the blueprints for building the container images that include everything (code, runtime, system tools and libraries, and settings) needed to run a piece of software. Because it increases the portability of applications, Docker has been especially valuable in organizations that use hybrid or multi-cloud environments. The use of Docker containers has grown rapidly over the past few years, and many consider it to be the future of virtualization.

AWS CloudFormation

CloudFormation allows you to define configuration for Infrastructure as Code, by directly editing template files, via the CloudFormation API, or the AWS CLI. CloudFormation is a free service—Amazon only charges for the services you provision via templates.

The following diagram illustrates the CloudFormation process. You create templates and save them in an S3 bucket. Then CloudFormation reads the template and creates a stack based on template definitions.

Source: Amazon Web Services

Managing template changes

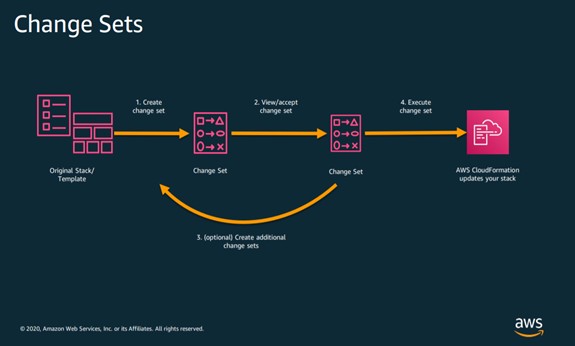

CloudFormation recognizes that a template has been edited and creates a change set, which specifies what needs to be changed in the resources you have provisioned, to reflect the changes in the template. Once you approve the change set, it is executed, and the resources are automatically modified.
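To make the idea of a change set more concrete, the sketch below compares the Resources sections of two versions of a template and labels each difference as Add, Modify, or Remove. This is only a conceptual illustration in Python: it is not how CloudFormation computes change sets internally, and the function name `summarize_changes` and the logical IDs are hypothetical.

```python
def summarize_changes(old_template: dict, new_template: dict) -> list:
    """List (action, logical_id) pairs describing how the resources differ."""
    old_resources = old_template.get("Resources", {})
    new_resources = new_template.get("Resources", {})
    changes = []
    for logical_id in sorted(set(old_resources) | set(new_resources)):
        if logical_id not in old_resources:
            changes.append(("Add", logical_id))
        elif logical_id not in new_resources:
            changes.append(("Remove", logical_id))
        elif old_resources[logical_id] != new_resources[logical_id]:
            changes.append(("Modify", logical_id))
    return changes


# Hypothetical "before" and "after" versions of the same template.
old_template = {
    "Resources": {
        "ExampleBucket": {"Type": "AWS::S3::Bucket"},
        "ExampleQueue": {"Type": "AWS::SQS::Queue"},
    }
}
new_template = {
    "Resources": {
        "ExampleBucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {"VersioningConfiguration": {"Status": "Enabled"}},
        },
        "ExampleTopic": {"Type": "AWS::SNS::Topic"},
    }
}

for action, logical_id in summarize_changes(old_template, new_template):
    print(action, logical_id)
```

Reviewing a summary like this before approving an update is the point of a change set: you see which resources would be touched before anything is modified.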

Anatomy Of A CloudFormation Template

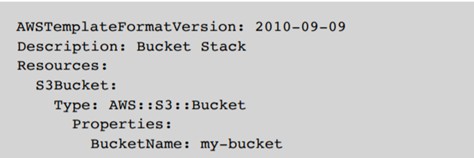

- Here’s a super simple CloudFormation template that creates an S3 bucket:

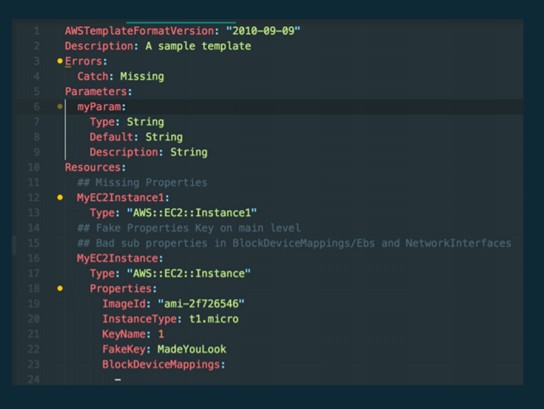

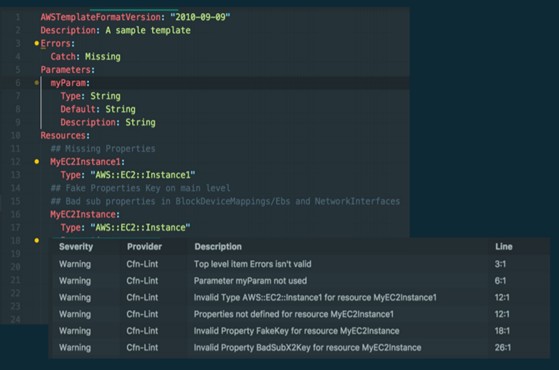
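For readers who want a text version to experiment with, here is a minimal sketch of a template that declares a single S3 bucket. It is not a transcription of the figures above. The template is built as a Python dictionary and serialized to JSON, which CloudFormation accepts alongside YAML; the logical ID `ExampleBucket` and the helper `render` are hypothetical names chosen for this example.

```python
import json

# Minimal CloudFormation template expressed as a Python dict.
# "ExampleBucket" is a hypothetical logical ID chosen for this sketch.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Description": "Minimal example: a single S3 bucket",
    "Resources": {
        "ExampleBucket": {
            # No Properties are given, so the bucket uses default settings
            # and CloudFormation generates a bucket name.
            "Type": "AWS::S3::Bucket",
        }
    },
}


def render(template: dict) -> str:
    """Serialize the template to JSON text that could be saved to a file."""
    return json.dumps(template, indent=2)


template_body = render(template)
print(template_body)
```

The printed JSON could be saved to a file and used as the template body when creating a stack.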

You can create CloudFormation templates using JSON or YAML. We prefer the latter, so all the templates included in this article are in YAML. Regardless of which you choose, templates can consist of the primary sections of information highlighted below, with the Resources section being the only one that is mandatory.

FORMAT VERSION (OPTIONAL)- This is the AWS CloudFormation template version that your template conforms to, and it identifies the capabilities of the template. This is not the same as the API or WSDL version. At the moment, the latest and only valid template version is 2010-09-09. Although this field is optional, it’s a good idea to include it in your templates for future reference.

DESCRIPTION (OPTIONAL)- This section is a text string that provides the reader with a short description (0 to 1024 bytes in length) of the template. This section must come right after the Format Version section. Be descriptive but concise!

METADATA (OPTIONAL)- This section isn’t used all that often, but here you can include additional information about your template. This can include information for third-party tools that you may use to generate and modify these templates and other general data. Please note that the Metadata section is not to be confused with the Metadata attribute that falls under the Resources section. This Metadata section should include information about the template overall, while the Metadata attribute should include data about a specific resource.

PARAMETERS (OPTIONAL)- You can use the Parameters section to input custom values to your template when you create or update a stack so you can make these templates portable for use in other projects. For instance, you can create parameters that specify the EC2 instance type to use, an S3 bucket name, an IP address range, and other properties that may be important to your stack. The Resources and Outputs sections often refer to Parameters, and any parameter they reference must be declared within the same template.
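As a rough illustration of parameters, the sketch below declares one parameter and references it from a resource with `Ref`, then checks that every `Ref` points at something declared in the same template. It is written in Python for easy experimentation; the parameter name `BucketNameParam` and the helpers `find_refs` and `undeclared_refs` are hypothetical, and the check is a simplification of what CloudFormation validates.

```python
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Parameters": {
        # Hypothetical parameter supplied when the stack is created.
        "BucketNameParam": {
            "Type": "String",
            "Description": "Name to give the example bucket",
        }
    },
    "Resources": {
        "ExampleBucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {"BucketName": {"Ref": "BucketNameParam"}},
        }
    },
}


def find_refs(node):
    """Yield every name used in a {"Ref": name} expression."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "Ref" and isinstance(value, str):
                yield value
            else:
                yield from find_refs(value)
    elif isinstance(node, list):
        for item in node:
            yield from find_refs(item)


def undeclared_refs(template: dict) -> list:
    """Return Ref targets that are neither parameters nor resources."""
    declared = set(template.get("Parameters", {})) | set(template.get("Resources", {}))
    used = set(find_refs(template.get("Resources", {})))
    used |= set(find_refs(template.get("Outputs", {})))
    # Pseudo parameters such as AWS::Region are supplied by CloudFormation.
    return sorted(name for name in used - declared if not name.startswith("AWS::"))


print(undeclared_refs(template))
```

An empty result means every reference resolves inside the template; a typo in a parameter name would show up in the returned list.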

MAPPINGS (OPTIONAL)- The Mappings section allows you to create key-value dictionaries to specify conditional parameter values. Examples of this include deploying different AMIs for each AWS region, or mapping different security groups to Dev, Test, QA, and Prod environments that otherwise share the same infrastructure stack. To retrieve values in a map, you use the “Fn::FindInMap” intrinsic function in the Resources and Outputs sections.
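The region-to-AMI case can be sketched as follows. The template fragment uses `Fn::FindInMap` with the `AWS::Region` pseudo parameter, and a small Python helper mimics the lookup locally so the behavior is easy to see. The AMI values are placeholders, not real image IDs, and `resolve_find_in_map` is a hypothetical helper that handles only this simple form of the function.

```python
template = {
    "Mappings": {
        "RegionMap": {
            # Placeholder values; real AMI IDs differ per region and over time.
            "us-east-1": {"AMI": "ami-PLACEHOLDER-EAST"},
            "eu-west-1": {"AMI": "ami-PLACEHOLDER-WEST"},
        }
    },
    "Resources": {
        "ExampleInstance": {
            "Type": "AWS::EC2::Instance",
            "Properties": {
                "ImageId": {
                    "Fn::FindInMap": ["RegionMap", {"Ref": "AWS::Region"}, "AMI"]
                },
                "InstanceType": "t2.micro",
            },
        }
    },
}


def resolve_find_in_map(template: dict, expression: dict, context: dict) -> str:
    """Look up a Fn::FindInMap expression, resolving Ref arguments from context."""

    def value_of(argument):
        if isinstance(argument, dict) and "Ref" in argument:
            return context[argument["Ref"]]
        return argument

    map_name, top_key, second_key = expression["Fn::FindInMap"]
    return template["Mappings"][value_of(map_name)][value_of(top_key)][value_of(second_key)]


image_expression = template["Resources"]["ExampleInstance"]["Properties"]["ImageId"]
print(resolve_find_in_map(template, image_expression, {"AWS::Region": "eu-west-1"}))
```

The same template can therefore be deployed unchanged in either region, with the map supplying the region-specific value.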

CONDITIONS (OPTIONAL)- Conditions allow you to use logic statements (just like an “if then” statement) to declare what should happen under certain situations. We mentioned an example above about Dev, Test, QA, and Prod environments. In this case, you can use conditions to specify the type of EC2 instance to deploy in each of these environments. If the environment is Prod, you can set the EC2 instance to be m4.large. If the environment is Test, you can set it to be t2.micro to save money.
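The Prod versus Test example could be expressed roughly as below, using the `Fn::Equals` and `Fn::If` intrinsic functions. The Python helpers evaluate the condition locally just to show the outcome; they support only the two forms used here. The names `EnvType`, `ImageIdParam`, `IsProd`, `evaluate_condition`, and `resolve_if` are hypothetical.

```python
template = {
    "Parameters": {
        "EnvType": {
            "Type": "String",
            "Default": "Test",
            "AllowedValues": ["Dev", "Test", "QA", "Prod"],
        },
        # The AMI is passed in so that no image ID is hard-coded here.
        "ImageIdParam": {"Type": "AWS::EC2::Image::Id"},
    },
    "Conditions": {
        "IsProd": {"Fn::Equals": [{"Ref": "EnvType"}, "Prod"]},
    },
    "Resources": {
        "ExampleInstance": {
            "Type": "AWS::EC2::Instance",
            "Properties": {
                "ImageId": {"Ref": "ImageIdParam"},
                "InstanceType": {"Fn::If": ["IsProd", "m4.large", "t2.micro"]},
            },
        }
    },
}


def evaluate_condition(template: dict, name: str, parameters: dict) -> bool:
    """Evaluate a condition defined with Fn::Equals against parameter values."""

    def value_of(argument):
        if isinstance(argument, dict) and "Ref" in argument:
            return parameters[argument["Ref"]]
        return argument

    left, right = template["Conditions"][name]["Fn::Equals"]
    return value_of(left) == value_of(right)


def resolve_if(template: dict, expression: dict, parameters: dict):
    """Pick the true or false branch of a Fn::If expression."""
    condition_name, if_true, if_false = expression["Fn::If"]
    return if_true if evaluate_condition(template, condition_name, parameters) else if_false


instance_type = template["Resources"]["ExampleInstance"]["Properties"]["InstanceType"]
for env in ("Prod", "Test"):
    print(env, resolve_if(template, instance_type, {"EnvType": env}))
```

Any environment other than Prod falls through to the cheaper instance type in this sketch, which matches the cost-saving intent described above.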

TRANSFORM (OPTIONAL)- The Transform section allows you to simplify your CloudFormation template by condensing multiple lines of resource declaration code and reusing template components. There are two types of transforms that CloudFormation supports:

- “AWS::Include” refers to template snippets that reside outside of the main CloudFormation template you’re working with. Thus, you can make multi-line resource declarations in YAML or JSON files stored elsewhere and refer to them with a single line of code in your primary CloudFormation template.

- “AWS::Serverless” specifies the version of the AWS Serverless Application Model (SAM) to use and how to process it.

You can declare multiple transforms in a template and CloudFormation executes them in the order specified. You can also use template snippets across multiple CloudFormation templates.

RESOURCES (REQUIRED)- The Resources section is the only section that is required in a CloudFormation template. In this section, you declare the AWS resources, such as EC2 instances, S3 buckets, Redshift clusters, and others, that you want deployed in your stack. You also specify the properties, such as instance size, IAM roles, and number of nodes, for each of these components. This is the section that will take up the bulk of your templates.
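
Because Resources is the only required section, the smallest useful template contains nothing else. The sketch below declares a hypothetical S3 bucket and EC2 instance (logical names and the AMI ID are placeholders) and serializes the result to JSON.

```python
import json

# Hypothetical minimal template: only the required Resources section.
template = {
    "Resources": {
        "DataBucket": {
            "Type": "AWS::S3::Bucket",
        },
        "AppServer": {
            "Type": "AWS::EC2::Instance",
            "Properties": {
                "InstanceType": "t2.micro",
                "ImageId": "ami-0123456789abcdef0",  # placeholder AMI ID
            },
        },
    }
}

# Each resource has a logical name, a Type, and optional Properties.
resource_types = {name: body["Type"] for name, body in template["Resources"].items()}

template_json = json.dumps(template, indent=2)
print(template_json)
```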

OUTPUTS (OPTIONAL)- In the Outputs section, you’ll describe the values that are returned when you want to view the properties of your stack. You can export these outputs for use in other stacks, or simply view them on the CloudFormation console or CLI as a convenient way to get important information about your stack’s components. (Thorn Technologies, Infrastructure as Code Handbook)
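
A minimal sketch of an Outputs section, assuming a hypothetical DataBucket resource and an export named shared-data-bucket-name. A second stack could consume the exported value with "Fn::ImportValue"; the exported_names helper is illustrative only.

```python
import json

# Hypothetical producer template: logical and export names are placeholders.
producer = {
    "Resources": {
        "DataBucket": {"Type": "AWS::S3::Bucket"},
    },
    "Outputs": {
        "BucketName": {
            "Description": "Name of the shared data bucket",
            "Value": {"Ref": "DataBucket"},
            "Export": {"Name": "shared-data-bucket-name"},
        },
        "BucketArn": {
            "Description": "ARN of the bucket (viewable, not exported)",
            "Value": {"Fn::GetAtt": ["DataBucket", "Arn"]},
        },
    },
}

# How another stack would read the exported value.
consumer_property = {"Fn::ImportValue": "shared-data-bucket-name"}


def exported_names(tpl):
    """List the export names a template makes available to other stacks."""
    return sorted(
        output["Export"]["Name"]
        for output in tpl.get("Outputs", {}).values()
        if "Export" in output
    )


print(json.dumps(producer["Outputs"], indent=2))
print("Exports:", exported_names(producer))
```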

Core Artifacts: Change Sets, Stacks, and Stack Sets

The Infrastructure Resource Lifecycle

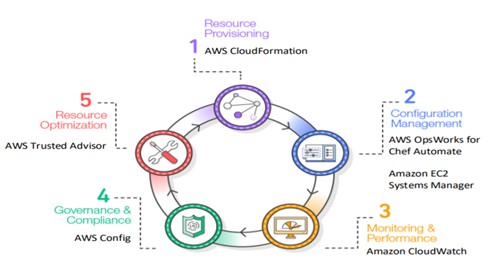

The figure illustrates a common view of the lifecycle of infrastructure resources in an organization. The stages of the lifecycle are as follows:

- Resource provisioning- Administrators provision the resources according to the specifications they want.

- Configuration management- The resources become components of a configuration management system that supports activities such as tuning and patching.

- Monitoring and performance- Monitoring and performance tools validate the operational status of the resources by examining items such as metrics, synthetic transactions, and log files.

- Compliance and governance- Compliance and governance frameworks drive additional validation to ensure alignment with corporate and industry standards, as well as regulatory requirements.

- Resource optimization- Administrators review performance data and identify changes needed to optimize the environment around criteria such as performance and cost management.

Each stage involves procedures that can leverage code. This extends the benefits of Infrastructure as Code from its traditional role in provisioning to the entire resource lifecycle. Every stage of the lifecycle then benefits from the consistency and repeatability that Infrastructure as Code offers. This expanded view of Infrastructure as Code results in a higher degree of maturity in the Information Technology (IT) organization as a whole.

AWS CloudFormation falls under the resource provisioning stage of this lifecycle.

Conclusion

Infrastructure as Code enables you to encode the definition of infrastructure resources into configuration files and manage those files under version control. We can now update our lifecycle diagram and show how AWS supports each stage through code.

Figure: Infrastructure resource lifecycle with AWS

AWS CloudFormation, AWS OpsWorks for Chef Automate, Amazon EC2 Systems Manager, Amazon CloudWatch, AWS Config, and AWS Trusted Advisor enable you to integrate the concept of Infrastructure as Code into all phases of the project lifecycle. By using Infrastructure as Code, your organization can automatically deploy consistently built environments that, in turn, can help your organization to improve its overall maturity.

Next Steps

You can begin the adoption of Infrastructure as Code in your organization by viewing your infrastructure specifications in the same way you view your product code. AWS offers a wide range of tools that give you more control and flexibility over how you provision, manage, and operationalize your cloud infrastructure. Here are some key actions you can take as you implement Infrastructure as Code in your organization:

- Start by using a managed source control service, such as AWS CodeCommit, for your infrastructure code.

- Incorporate a quality control process via unit tests and static code analysis before deployments.

- Remove the human element and automate infrastructure provisioning, including infrastructure permission policies.

- Create idempotent infrastructure code that you can easily redeploy.

- Roll out every new update to your infrastructure via code by updating your idempotent stacks. Avoid making one-off changes manually.

- Embrace end-to-end automation.

- Include infrastructure automation work as part of regular product sprints.

- Make your changes auditable, and make logging mandatory.

- Define common standards across your organization and continuously optimize.

By embracing these principles, your infrastructure can dynamically evolve and accelerate with your rapidly changing business needs.
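
The quality control step in the list above can be sketched as a small static check that runs before any deployment. The lint_template function and its rules are hypothetical and deliberately minimal; a real pipeline would typically add CloudFormation's own template validation and a dedicated linter.

```python
def lint_template(template):
    """Return a list of structural problems found in a template dictionary."""
    resources = template.get("Resources")
    if not isinstance(resources, dict) or not resources:
        # Resources is the only required section of a template.
        return ["template must declare at least one resource"]

    problems = []
    for name, body in resources.items():
        if not isinstance(body, dict) or "Type" not in body:
            problems.append(f"resource {name}: missing Type")
    for name, body in template.get("Outputs", {}).items():
        if not isinstance(body, dict) or "Value" not in body:
            problems.append(f"output {name}: missing Value")
    return problems


# Hypothetical templates used as test fixtures.
good = {"Resources": {"DataBucket": {"Type": "AWS::S3::Bucket"}}}
bad = {
    "Resources": {"DataBucket": {"Properties": {}}},
    "Outputs": {"BucketName": {"Description": "no value given"}},
}

print(lint_template(good))
print(lint_template(bad))
```

Running such checks in the same pipeline that deploys the stack keeps manual review out of the critical path and makes every change repeatable.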

Bibliography

Infrastructure as Code, AWS Whitepaper, July 2017, https://d1.awsstatic.com/whitepapers/DevOps/infrastructure-as-code.pdf

“Infrastructure as Code Handbook”, Thorn Technologies, 2018, https://www.thorntech.com/wp-content/uploads/2018/10/Infrastructure-as-Code-Handbook-Final.pdf

“Amazon Web Services.” Wikipedia, Wikimedia Foundation, 13 Apr. 2020, en.wikipedia.org/wiki/Amazon_Web_Services

Infrastructure as Code, AWS Solutions Best Practices, 20 Apr. 2020, https://pages.awscloud.com/rs/112-TZM-766/images/2020_0403-DEV_Slide-Deck.pdf

AWS Documentation, Well Architected Framework,