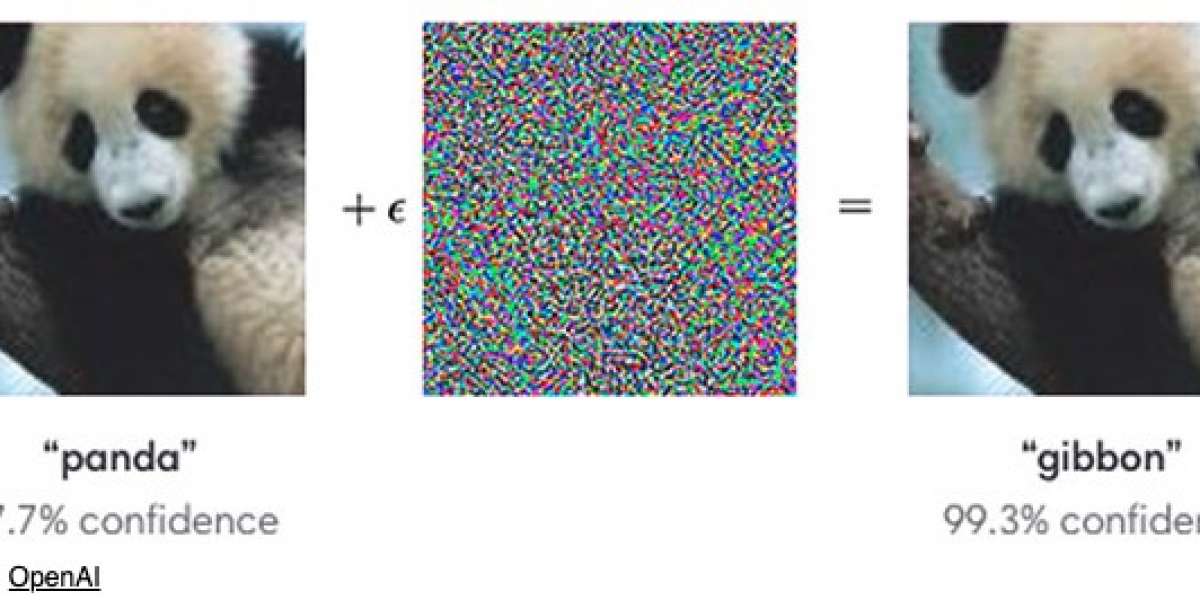

An overview of Adversarial Attacks and Defenses | #adversarialattack #machinelearning #imageclassification

