Abstract

The main purpose of this project is to set up a machine learning workflow and train the organization's data analysts in it, so that they can replicate the workflow in other programs and reduce costs for the organization. This is a real-world initiative in which the organization provides bed nets to the people of Nigeria. The organization collected household profiles through a door-to-door survey to plan the distribution. Distribution begins with the organization issuing net cards based on the number of people living in a household; these net cards are then redeemed at a distribution point in exchange for bed nets. For this initiative, I am helping to predict whether a household in Nigeria will redeem its bed nets, using classification algorithms. The analytical approach uses logistic regression, random forest and XGBoost models. The models are built in Python, with Power BI used for data visualization. The model classifies each household by redemption. Predicting redemption in advance helps the organization know how many bed nets to have ready, which in turn avoids losses. The higher the redemption rate, the lower the loss for the organization and the greater the improvement in the livelihood of the people in Nigeria. The model would be deployed on the Microsoft Azure platform so that the data analysts can use and improve it. The organization seeks to standardize this process so that the use of machine learning can be replicated in many programs.

- Introduction

Problem Statement:

Predict the redemption of bed nets in the Nigeria region so that the spread of malaria is reduced. With this prediction, the organization will know in advance which households will redeem their bed nets and which will not. These numbers will help reduce the cost the organization spends on bed net distribution.

Hypothesis:

The data is large and high-frequency, which makes it difficult to work with, so it first needs to be cleaned and preprocessed. Two different datasets need to be merged: the Nigeria household dataset and the query time redemption dataset. The next step is to analyze the datasets and build machine learning models to predict the redemption of bed nets. The last step is to perform visualizations and exploratory data analysis for a better understanding of the data.

Assumptions:

I will be working on the dataset provided for the Nigeria region. The main objective of our project is to develop a model on the Nigeria dataset that can be reused for future projects such as the Benin project. Other assumptions I could define are whether the head of the household is employed and whether this factor affects the well-being of all the members of the household. The base camp may be far from the houses, which may prevent people from claiming their bed nets. Another potential reason for not collecting the bed nets is the risk of their catching fire and the reported death incidents (Egrot, et al., 2014).

1.1 Project Objectives

The main objective of our project is to develop a model on the Nigeria dataset that can be reused for future projects in Benin and other countries. We also aim to understand the factors behind the non-redemption of bed nets, taking the local environment into account, using exploratory data analysis and ML. I am helping the organization set up a machine learning workflow and train the data analysts in applying the protocol of the MIRA project to the Nigeria project, so that they can repeat it. I also serve as a contact point for advice in case problems arise.

- Data Acquisition

2.1 Overview:

I have been provided with 2 datasets: the Nigeria household dataset and the QryTimeRedemption dataset. The Nigeria household dataset contains data about the households and the distribution points, and is the major dataset. The QryTimeRedemption dataset contains 33789 data points and 6 columns: interviewer id, date of registration, average minutes spent per household, number of cards redeemed for an interviewer_id, number of cards (total number of cards for an interviewer_id) and household redemption rate per interviewer_id.

Nigeria Data

The Nigeria household dataset contains 1362905 data points (about 1.36 million rows) and 19 columns.

| Column | Description |
| --- | --- |
| distributionPoint | Distribution point of bed nets |
| date | Date of distribution |
| state | State where the households belong |
| lga | Local government area |
| ward | Subset area of lga |
| hh_id | Household id |
| noOfCards | Total number of cards given to a household |
| latitude | Latitude of household |
| longitude | Longitude of household |
| Origin | Location of household |
| interviewer_id | Id of interviewer |
| dp_longitude | Distribution point longitude |
| dp_latitude | Distribution point latitude |
| Destination | Location of destination |
| hh_redemption_rate | Redemption rate of bed nets in household |
| distanceFromDPinMiles | Distance of household from distribution point in miles |
| noOfCardRedeemed | Total number of cards redeemed by household |
| Overall_redemption_rate | Overall redemption rate |
| average_rates_lga | Average redemption rate with respect to lga |
| average_minutes_per_hh | Average minutes per household spent by an interviewer |

2.2 Data Context

This data was collected in Nigeria, Africa, through a door-to-door survey. It provides the dates on which the bed nets were distributed, the latitude and longitude of households, the interviewer id, the number of cards and the number of cards redeemed.

- a) What has affected the dataset during the lifetime?

Since this is a high-frequency data collection on a monthly basis, nothing has affected the data during its lifetime.

- b) When – the time period the dataset covers?

April 2018

- c) Where – location of the dataset?

Nigeria, a country on the African continent.

- d) How was the dataset collected?

Survey Data

- e) Why – the reasons for the dataset?

To reduce the spread of malaria in the Nigeria region by providing insecticide-treated bed nets.

2.3 Data Conditioning

- Data conditioning is an important step for improving the quality of the data so that it can be used productively in an ML model.

- I linked the two datasets, Nigeria household and QryTimeRedemption, on the common fields (columns) interviewer_id and date, using a left join on the Nigeria dataset. From QryTimeRedemption I took the columns interviewer id, average minutes per household and date of registration.

- The target variable of our project is ‘Net_redemption’.

- Features that were local to Nigeria, and other unimportant features, were dropped. These variables are 'distributionPoint', 'state', 'lga', 'ward', 'hh_id', 'latitude', 'longitude', 'Origin', 'interviewer_id', 'dp_longitude', 'dp_latitude', 'Destination', 'noOfCardRedeemed', 'Overall_redemption_rate', 'average_rates_lga' and 'date_of_registration'.

- Null values in the average_minutes_per_hh column were replaced with the mean of average_minutes_per_hh for the corresponding lga (local government area).

- I also created a column for the size of the household, computed as (noOfCards) * 2.

- For a better fit, I added the squares of three columns (average_minutes_per_hh_SQR, distanceFromDPinMiles_SQR and size_of_hh_SQR), making it a polynomial model.
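The conditioning steps above can be sketched in pandas as follows. This is a minimal illustration on made-up rows, not the project's actual code: the toy values, the frame names `households` and `qry_time`, and the exact join keys are assumptions based on the column names described in this section.

```python
import pandas as pd

# Toy stand-ins for the two datasets (all values are invented for illustration).
households = pd.DataFrame({
    "hh_id": [1, 2, 3, 4],
    "lga": ["A", "A", "B", "B"],
    "interviewer_id": [10, 11, 12, 13],
    "date": ["2018-04-02", "2018-04-02", "2018-04-03", "2018-04-03"],
    "noOfCards": [1, 2, 3, 4],
    "distanceFromDPinMiles": [0.4, 1.2, 0.8, 2.5],
})
qry_time = pd.DataFrame({
    "interviewer_id": [10, 12, 13],
    "date_of_registration": ["2018-04-02", "2018-04-03", "2018-04-03"],
    "average_minutes_per_hh": [12.0, 20.0, 30.0],
})

# Left join: every household row is kept, even without a matching interviewer/date.
df = households.merge(
    qry_time,
    how="left",
    left_on=["interviewer_id", "date"],
    right_on=["interviewer_id", "date_of_registration"],
)

# Fill missing minutes with the mean of the same lga.
lga_mean = df.groupby("lga")["average_minutes_per_hh"].transform("mean")
df["average_minutes_per_hh"] = df["average_minutes_per_hh"].fillna(lga_mean)

# Derived features: household size and squared terms.
df["size_of_hh"] = df["noOfCards"] * 2
for col in ["average_minutes_per_hh", "distanceFromDPinMiles", "size_of_hh"]:
    df[col + "_SQR"] = df[col] ** 2
```

In this toy data, household 2 has no matching interviewer record, so its minutes are filled from the other household in lga A.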

2.4 Pre-Processing and Label Encoding

Data Preprocessing:

The Nigeria dataset is used to train the model. The data consists of around 18 columns.

The following variables, including the categorical ones, are removed:

- state, lga, ward

- latitude, longitude

- dp_longitude, dp_latitude

- Origin, Destination

- hh_id, interviewer_id

- noOfCardRedeemed

- Overall_redemption_rate

- average_rates_lga

The date column was reduced to the day of the week (Sunday, Monday, etc.), which was then one-hot encoded.

Outliers:

A couple of columns had outliers and needed preprocessing. One is average_minutes_per_hh, the average time taken by an interviewer per household on a given date. Its values ranged from 0 to 480 minutes, so rows where average_minutes_per_hh is greater than 90 minutes were removed as outliers. The other is the distance of a household from the distribution point in miles; here I removed rows where the distance was more than 6 miles.
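As a small illustration of the two cutoffs described above, the filter could look like this in pandas. The rows are invented, and the constant names `MAX_MINUTES` and `MAX_MILES` are hypothetical; only the thresholds (90 minutes, 6 miles) come from the text.

```python
import pandas as pd

df = pd.DataFrame({
    "average_minutes_per_hh": [5.0, 45.0, 120.0, 480.0, 30.0],
    "distanceFromDPinMiles": [0.5, 7.2, 1.0, 0.3, 6.0],
})

MAX_MINUTES = 90  # rows above this are treated as outliers
MAX_MILES = 6     # rows above this are treated as outliers

keep = (df["average_minutes_per_hh"] <= MAX_MINUTES) & (
    df["distanceFromDPinMiles"] <= MAX_MILES
)
filtered = df[keep].reset_index(drop=True)
```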

One-Hot Encoding:

In machine learning, columns that contain categorical labels are usually converted into numeric form so that a model can use them. Here the day-of-the-week column, with values Sunday, Monday, etc., was one-hot encoded. This created six new columns in the dataset, one for every day of the week except one, which serves as the baseline. The final target variable is hh_redemption_rate (household redemption rate).
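A minimal sketch of this encoding, assuming pandas is used: `drop_first=True` drops the alphabetically first category, which for English day names is Friday. That is consistent with Friday being the comparison base in the logistic regression results later in the report, although the report does not state how the baseline was actually chosen.

```python
import pandas as pd

# One made-up date for each day of one week in April 2018.
df = pd.DataFrame({"date": [
    "2018-04-02", "2018-04-03", "2018-04-04", "2018-04-05",
    "2018-04-06", "2018-04-07", "2018-04-08",
]})

# Extract the day name, then one-hot encode it, leaving out one baseline column.
df["day_of_week"] = pd.to_datetime(df["date"]).dt.day_name()
dummies = pd.get_dummies(df["day_of_week"], drop_first=True)
```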

Splitting the data:

Because the data is used for modeling, we split it into train and test datasets, with 70% for training and 30% for testing. After the split, the train data has 1575289 rows and the test data has 675125 rows, each with 12 columns. The split is stratified so that the proportions of the class labels are maintained.
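A stratified 70/30 split can be sketched with scikit-learn's `train_test_split`. The synthetic arrays and the `random_state` value below are assumptions for illustration only.

```python
import numpy as np
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 3))        # made-up features
y = np.array([1] * 800 + [0] * 200)   # made-up labels, 80% positive

# stratify=y keeps the 80/20 class ratio in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=42
)
```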

Data - Balancing: Binarizing the data

In the output variable, household_redemption_rate is 100% for about 1M of the total 1.3M rows; the remaining 0.3M rows have other values such as 0%, 25%, 33%, 67% and 75%. So, we assigned ‘1’ to rows with 100% redemption and ‘0’ to all other rows.
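The binarization rule above amounts to a single comparison. A sketch on a handful of invented rates, where the name `net_redemption` follows the target variable named earlier:

```python
import pandas as pd

rates = pd.Series([100.0, 0.0, 25.0, 33.0, 67.0, 75.0, 100.0])

# 1 only for full redemption, 0 for everything else (including partial redemption).
net_redemption = (rates == 100).astype(int)
```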

Smote using Oversampling:

As discussed above, the data is imbalanced, so we performed oversampling using SMOTE. Before oversampling, the counts of 1 and 0 were not balanced; after oversampling they are balanced, and the data can be used for modeling.
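To make the idea behind SMOTE concrete, here is a simplified NumPy sketch of its core step: each synthetic row is placed on the line segment between a minority row and one of its nearest minority neighbours. This is not the implementation used in the project (the report does not name one; the `SMOTE` class in imbalanced-learn is a common choice), and the function name `smote_sketch` and all data are hypothetical. The pairwise distance matrix makes it suitable for small examples only.

```python
import numpy as np

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority rows by interpolating toward near neighbours."""
    rng = np.random.default_rng(seed)
    # Pairwise Euclidean distances between minority rows.
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)               # a row is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]  # k nearest minority rows
    base = rng.integers(0, len(X_min), size=n_new)
    picked = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                   # interpolation factor in [0, 1)
    return X_min[base] + gap * (X_min[picked] - X_min[base])

rng = np.random.default_rng(1)
X_major = rng.normal(0, 1, size=(80, 2))   # made-up majority class
X_minor = rng.normal(3, 1, size=(20, 2))   # made-up minority class

X_new = smote_sketch(X_minor, n_new=len(X_major) - len(X_minor))
X_minor_balanced = np.vstack([X_minor, X_new])
```

After this step both classes have 80 rows, which mirrors the balanced counts described above.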

Multicollinearity:

After preprocessing the data, we checked for multicollinearity and obtained the above graph, which shows the correlation between the variables in the data. distanceFromDPinMiles and hh_redemption_rate have a correlation of –0.041, which indicates a very weak negative linear relationship: the redemption rate tends to fall slightly as distance increases. The correlations are low because the data is widely spread.

Variance Inflation Factor:

VIF stands for Variance Inflation Factor. It measures how much the variance of an estimated regression coefficient is inflated because the predictor is linearly related to the other predictors. It is calculated by regressing each independent variable on the others. For example, take an independent variable X and regress it on the other independent variables, say Y and Z, to find how much of X is explained by them; with $R_X^2$ the R squared of that regression, $\mathrm{VIF}_X = 1 / (1 - R_X^2)$ (Datalab, n.d.).

From the above formula, the higher the R squared value, the higher the VIF, which indicates that the independent variable X is collinear with the other two variables Y and Z. We performed the Variance Inflation Factor test to check for multicollinearity between the features. A common rule of thumb is that a VIF below 10 indicates no serious collinearity problem, while a value above 10 indicates a problematic amount; a stricter cutoff of 5 is also used. A simple solution to multicollinearity is to drop the variables that exceed the cutoff.

None of our variables has a VIF greater than 5, so there is no multicollinearity even under the stricter cutoff. If any variable had exceeded it, the best approach would be to drop that variable and recalculate the VIF for the remaining variables.
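The VIF calculation described above can be sketched with plain NumPy: regress each column on the others, take the R squared, and apply 1 / (1 - R²). The helper name `vif` and the synthetic columns are assumptions for illustration; statistics libraries offer equivalent ready-made functions.

```python
import numpy as np

def vif(X):
    """Return the VIF of each column of X (rows are observations)."""
    out = []
    for j in range(X.shape[1]):
        y = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(len(y)), others])  # add an intercept
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        resid = y - A @ coef
        r2 = 1 - resid.var() / y.var()
        out.append(1.0 / (1.0 - r2))
    return np.array(out)

rng = np.random.default_rng(0)
a = rng.normal(size=500)
b = rng.normal(size=500)                 # independent of a
c = a + 0.1 * rng.normal(size=500)       # nearly a copy of a, so collinear

vifs = vif(np.column_stack([a, b, c]))
```

Here `a` and `c` come out with a VIF far above 10, while `b` stays close to 1.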

2.5 Exploratory Data Analysis of Nigeria Data:

Descriptive Statistics

- Pair plot of a few variables in the raw data. The noOfCards column gives the number of cards assigned to a family and contains discrete numeric values.

From the distanceFromDPinMiles graph, we can see that some of the outliers are in the ranges 2000 to 4000 miles and 6000 to 8000 miles.

The hh_redemption_rate shows a spike at 100, which corresponds to the households that have claimed their bed nets.

hh_redemption_rate is measured in percent and distanceFromDPinMiles in miles.

Pair plot of the significant variables after removing outliers from the distanceFromDPinMiles column.

Histogram

- Distribution of the ‘hh_redemption_rate’ column. From the histogram we can see the following distribution.

- Households with 100% redemption – 84.63%

- Households with 0% redemption –12.98%

- Households with partial redemption - 2.3%

The above figure gives the count of all the redemption rates. It shows that mainly the data is either 100 or 0. There are few partial redemptions.

- Distribution of the ‘distanceFromDPinMiles’ column. We considered a maximum distance of 6 miles between the distribution point and the house. For most households the distance is within 1 mile.

The below bar plot shows the count of bed nets distributed, redeemed and not redeemed.

By default, we computed the Pearson correlation on the variables and got a correlation of -0.041 between distance and hh_redemption_rate, which is very weak.

Spearman Rank Correlation between distance and redemption rate = -0.077

Kendall's tau correlation between distance and redemption rate = -0.0627

The Spearman rank correlation has the largest magnitude of the three, but all three are close to zero.
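The three coefficients can be computed with `scipy.stats`. The sketch below uses synthetic data with a deliberately weak negative relationship, so the numbers will not match the ones reported above.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
distance = rng.exponential(scale=1.0, size=2000)
# Hypothetical redemption rate that drops weakly with distance.
rate = np.clip(90 - 5 * distance + rng.normal(0, 30, size=2000), 0, 100)

pearson_r, _ = stats.pearsonr(distance, rate)      # linear association
spearman_rho, _ = stats.spearmanr(distance, rate)  # rank-based, monotonic
kendall_tau, _ = stats.kendalltau(distance, rate)  # rank-based, pairwise concordance
```

Spearman and Kendall work on ranks, so they are less sensitive to the skewed distance values than Pearson.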

We grouped the rows by size of household (size of household = 2 * noOfCards) and took the averages of distanceFromDPinMiles, hh_redemption_rate and average_minutes_per_hh, as shown in the screenshot below.

On this grouped data, the correlations were stronger.

distanceFromDPinMiles and hh_redemption_rate have a positive correlation of 0.54, meaning that across household-size groups, a higher average distance tends to go with a higher average redemption rate.

average_minutes_per_hh and hh_redemption_rate have a negative correlation of 0.65, meaning that groups with more average minutes per household tend to have a lower average redemption rate.

Here we performed a descriptive analysis of redemption rate by lga. We found the top 10 lgas with the highest average redemption rate and the 10 lgas with the lowest, and performed a t-test to check the significance of this finding.

Consider the lgas with the highest and lowest redemption. Yewa North (94.16) and Obafemi Owode (79.311) differ by 14.85, which is a large gap. To check whether this difference between the 2 lgas is significant, we performed a t-test.

T-test:

To check whether the difference is significant, we performed a t-test, which gives a p value. The p value from the t-test using scipy.stats is 0.002017593823665442, which is below 0.05, so the difference is statistically significant. Geographical differences such as terrain, and demographic differences such as the availability of transport, may be affecting the redemption rate; the result suggests that geography does have an impact on redemption.

We also performed a descriptive analysis of redemption rate by distribution point. We found the top 10 distribution points with the highest average redemption rate and the bottom distribution points with the lowest, and again performed a t-test to check the significance of this finding.
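A two-sample t-test of this kind can be run with `scipy.stats.ttest_ind`. The report does not say which variant was used, so the Welch form (`equal_var=False`) below is an assumption, and the two samples are simulated with redemption probabilities close to the lga averages quoted above.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
# Simulated household redemption rates (0 or 100) for two hypothetical lgas.
lga_high = rng.binomial(1, 0.94, size=500) * 100
lga_low = rng.binomial(1, 0.79, size=500) * 100

# Welch's t-test does not assume equal variances in the two groups.
t_stat, p_value = stats.ttest_ind(lga_high, lga_low, equal_var=False)
significant = p_value < 0.05
```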

2.6 Analytics/Algorithms

We have implemented 2 things here:

1) Predicting the 100% redemption of bed nets by a household

2) Predicting the probability of each bed net to be redeemed.

Initially, for data modeling, we built regression models, namely Linear Regression and Random Forest Regression. Both gave a low R² value, which led to the conclusion that the data was not suitable for regression analysis.

So, we took the classification approach, which gave a more conclusive analysis of the data and produced findings that would be beneficial for the organization's team.

Since we need to predict redemption for each household, the output is a binary value, so we perform classification on the dataset. The algorithms we chose are Logistic Regression, Random Forest and XGBoost.

Before proceeding with modelling, we fitted a logistic regression to estimate the relationship between the predictors and the response variable. This tells us not only whether they are significantly related but also how much the expected likelihood of redemption changes as the predictors change.

- The p value is used to check the significance of the predictors. Variables with a p value below 0.05 are considered significant. As we can see from the screenshot above, all the variables have a p value less than 0.05, which means they have a significant impact on the probability of 100% redemption; hence we use all the features for the modelling.

- From the estimates, we can interpret the following: for a one-unit (one-mile) rise in distance, the log (odds of redemption) decreases by 0.13.

- For the categorical variables, Friday is taken as the base for comparison. For example, the log (odds of redemption) is higher on Monday by 0.244 compared to Friday.

- Because the coefficients are on the log (odds) scale, we can compute margins to express the estimates as changes in probability.

- As distance increases by 1 mile, the probability of redemption decreases by 3%.

- Average mins per HH – highly significant, but its magnitude has little effect on redemption.

- Size_of_hh: with every additional member in the family, the probability of redemption increases by 2.15%.
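The step from a log-odds coefficient to a probability change can be illustrated with a short calculation. The coefficient -0.13 is taken from the text; evaluating the marginal effect at a baseline probability of 0.5 is an assumption made here for illustration (margins are usually averaged over the data), and the helper name `marginal_effect` is hypothetical.

```python
import math

coef_distance = -0.13  # log-odds change per extra mile (from the text)

# Odds ratio: each extra mile multiplies the odds of redemption by this factor.
odds_ratio = math.exp(coef_distance)

def marginal_effect(coef, p):
    """Approximate change in probability per unit of x at baseline probability p."""
    return coef * p * (1 - p)

effect_at_half = marginal_effect(coef_distance, 0.5)
```

At a baseline of 0.5 the effect is about -0.03 per mile, in line with the 3% decrease quoted above, and the odds ratio is about 0.88.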

We consider base as Friday to compare the logistic results.

- Probability of Redemption on Monday is 6.04% higher than probability of redeeming on Friday

- Probability of Redemption on Tuesday is 4.6% higher than probability of redeeming on Friday

- Probability of Redemption on Wednesday is 6.3% higher than probability of redeeming on Friday

- Probability of Redemption on Thursday is 7.6% higher than probability of redeeming on Friday

- Probability of Redemption on Saturday is 5.8% higher than probability of redeeming on Friday

- Probability of Redemption on Sunday is 2.2% higher than probability of redeeming on Friday

We also did a Logistic Regression which predicts the probability of each bed net to be redeemed.

The number of redeemed bed nets is a count variable (a kind of discrete variable). It can be assumed to follow a Binomial distribution (n, p(x)), where n is the total number of bed nets and p(x) is the probability of redeeming a bed net given the independent variable x (it can also be treated as the redemption rate given x). Hence, logistic regression can be applied to the count data, and it can help predict p(x).

Below is the R code example in doing the logistic regression for the count data:

- Looking at the p values, the only insignificant variable is Tuesday.

- From the results, for an increase of 1 mile, the log (odds) of redeeming a bed net decreases by 0.15.

- For the categorical variables, Friday is taken as the base for comparison. For example, the log (odds) of redeeming a bed net is higher on Monday by 0.061 compared to Friday.

Machine Learning Model:

We have done modelling for Predicting the 100% redemption by a household

For the machine learning models, we have used 70% of the data for the training purposes and the remaining 30% of the data for the test purposes. Also, when performing the binary classification, we noticed that for the output variable the class is not balanced, so we performed the oversampling (smote analysis) which helped the balancing the output variable net_redemption. This gave us a more unbiased and realistic output.

For the better fit of the model, we can square few columns like distanceFromDPinMiles, average_minutes_per_hh, size_of_hh.

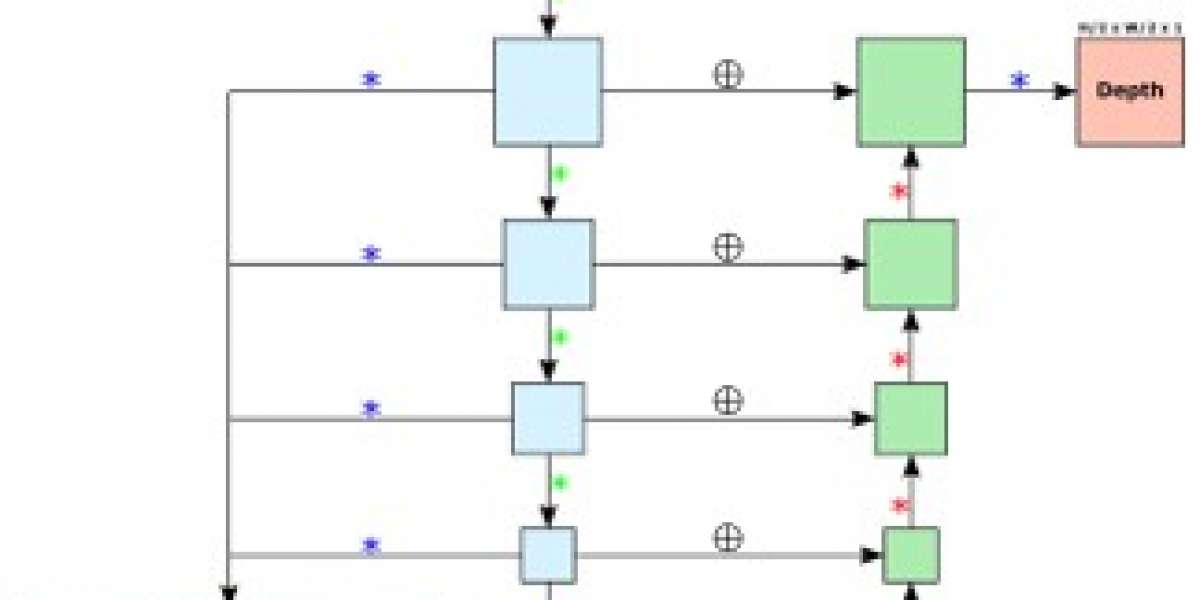

Fig. We have used these columns for the model, we did some feature engineering and have created some columns with the square of few columns, making it a polynomial model.

Logistic Regression:

Logistic Regression is generally used for classification when the dependent variable is categorical. It requires a threshold on which the classification is based. For example, to predict whether a person will collect the bed net or not, Logistic Regression computes the linear function z = w*x + b and applies the sigmoid function to it, which gives a probability between 0 and 1. As z tends to infinity the probability approaches 1, and as z tends to –infinity it approaches 0; the observation is classified as 1 if the probability is above the threshold and as 0 otherwise. We use binary logistic regression since there are 2 output classes in our problem statement (Swaminathan, n.d.). We mainly performed Logistic Regression to understand the significance of the variables. The Logistic Regression model gave us a test accuracy of 55%. Its AUC of 0.55 means that there is a 55% chance that the model ranks a randomly chosen positive case above a randomly chosen negative one, which is only slightly better than chance. The higher the AUC value, the better the model.
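The prediction rule described above (linear score, sigmoid, threshold) fits in a few lines of NumPy. The weights, intercept and feature rows below are hypothetical values chosen for illustration, not fitted coefficients from the project.

```python
import numpy as np

def sigmoid(z):
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def predict(X, w, b, threshold=0.5):
    z = X @ w + b                       # linear score z = w.x + b
    p = sigmoid(z)                      # predicted probability of class 1
    return p, (p >= threshold).astype(int)

w = np.array([-0.13, 0.02])             # hypothetical weights: distance, household size
b = 0.5                                 # hypothetical intercept
X = np.array([[0.5, 4.0], [5.0, 2.0], [20.0, 2.0]])

probs, labels = predict(X, w, b)
```

With these made-up weights, only the household closest to the distribution point is classified as redeeming.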

Random Forest Classifier:

Random forest is a model based on an ensemble of decision trees, which offers a more flexible functional form. Using random forest helps prevent overfitting and increases predictive power. It can be used for both classification and regression; for our project we use it for classification (Interpreting random forests, n.d.), since the target variable (net_redemption) is categorical. We chose random forest specifically so that we could perform feature selection with it, and because of its high accuracy and, most importantly, its interpretability, since we are primarily concerned with the factors affecting redemption (Blog, n.d.). The Random Forest classifier gave us a test accuracy of 77.9%. We also ran it with 10-fold cross validation to check for overfitting, which gave a similar accuracy of 77.89%.

True Positive = 272392 True Negative = 253534

False Positive = 84029 False Negative = 65170

From the confusion matrix, we can say that 525926 rows are correctly classified. 149199 rows are incorrectly classified.
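The counts above can be turned into the usual metrics with simple arithmetic. Note that the precision, recall and F1 computed here are for the positive class only, whereas the figures reported elsewhere in this report may be averages over both classes.

```python
tp, tn = 272392, 253534
fp, fn = 84029, 65170

total = tp + tn + fp + fn
accuracy = (tp + tn) / total
precision = tp / (tp + fp)   # share of predicted positives that are correct
recall = tp / (tp + fn)      # share of actual positives that are found
f1 = 2 * precision * recall / (precision + recall)
```

The accuracy works out to about 0.779, matching the 77.9% test accuracy of the random forest.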

VARIABLE IMPORTANCE:

We extracted the feature importances from the Random Forest model. Feature importance gives the relative importance of the predictors. From it, we can say that distance is 4 times as important as average_min_per_hh.

distanceFromDPinMiles_SQR (0.3721) is slightly more important than distanceFromDPinMiles (0.3708). The most important variables are distance, average minutes per household and size of the household.
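Feature importances of this kind are exposed by scikit-learn's `RandomForestClassifier` through the `feature_importances_` attribute. The sketch below uses synthetic data in which only distance drives the label; the hyperparameters and the extra `noise` column are assumptions for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n = 2000
distance = rng.exponential(1.0, n)
minutes = rng.uniform(0, 90, n)
noise = rng.normal(size=n)
# Made-up label: redemption depends only on distance, plus some randomness.
y = (distance + 0.3 * rng.normal(size=n) < 1.0).astype(int)

X = np.column_stack([distance, minutes, noise])
names = ["distanceFromDPinMiles", "average_minutes_per_hh", "noise"]

model = RandomForestClassifier(n_estimators=50, random_state=0).fit(X, y)
# Importances sum to 1; sort from most to least important.
ranking = sorted(zip(names, model.feature_importances_), key=lambda t: -t[1])
```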

XG Boost

XGBoost is a decision-tree-based ensemble machine learning algorithm that uses a gradient boosting framework. For prediction problems involving unstructured data, artificial neural networks tend to outperform other algorithms or frameworks, whereas for structured, tabular data such as ours, decision-tree-based algorithms are a strong choice (Morde, n.d.). The accuracy of the XGBoost classifier on the test data after SMOTE is 62%. We also obtained precision, recall and F1 score, which are reported with the XGBoost results in the SMOTE section, and an ROC curve with AUC = 0.62.

An AUC of 0.62 means that there is a 62% chance that the model ranks a randomly chosen positive case above a randomly chosen negative one.

K-Fold Cross Validation

K-fold cross validation is a technique that evaluates the model on held-out parts of the data to check that it does not overfit. The dataset is split into K pieces; in each round, K-1 pieces are used to train the model and the remaining piece is used to test it, so that every piece serves once as the test set. We used it in our project to guard against overfitting. We performed 10-fold cross validation on the random forest, which gave an accuracy of 77.89%.
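A 10-fold cross validation of a random forest can be sketched with scikit-learn's `cross_val_score`. The synthetic data, the stratified and shuffled folds, and the small forest size are assumptions chosen to keep the example fast.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 4))
y = (X[:, 0] + 0.5 * rng.normal(size=500) > 0).astype(int)  # made-up labels

# Each of the 10 folds is used once as the test piece; the other 9 train the model.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
scores = cross_val_score(
    RandomForestClassifier(n_estimators=30, random_state=0),
    X, y, cv=cv, scoring="accuracy",
)
mean_accuracy = scores.mean()
```

A mean fold accuracy close to the single-split test accuracy, as reported above, is the usual sign that the model is not overfitting.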

Smote on the dataset:

We also applied SMOTE to the data. SMOTE produces synthetic rows from the existing rows. After SMOTE, the counts of 1’s and 0’s are equal, so there is no class imbalance in the data. We then performed logistic regression and got an accuracy of 54.88% on the test data.

Logistic Regression classification report after smote:

XGBoost Classification report after smote: 62%

Random Forest classification report after smote: 78%

Hyper Parameter Tuning, RandomizedSearchCV using Logistic Regression:

Hyperparameter tuning is the process of choosing the settings of an algorithm that are fixed before the actual training process starts, so that the algorithm performs at its best. We performed a randomized hyperparameter search with RandomizedSearchCV on this model to find parameters that give a higher accuracy. The major advantage of this method is less processing time compared to other methods such as an exhaustive grid search (Boyle, n.d.). The resulting hyperparameters are shown below, and we used them in our model.

Fig : The best parameters obtained from Hyper parameter tuning for Logistic Regression.

We got an accuracy of 56% using the best parameters generated by RandomizedSearchCV for Logistic Regression. We also tuned the hyperparameters of the Random Forest model using RandomizedSearchCV, but did not see any significant improvement; the accuracy of the model stayed at 78%.
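A randomized search over a logistic regression hyperparameter can be sketched as follows. The search space (a log-uniform range for the regularization strength `C`), the number of iterations and the synthetic data are all assumptions; the report does not list the parameters that were actually searched.

```python
import numpy as np
from scipy.stats import loguniform
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RandomizedSearchCV

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 3))
y = (X[:, 0] - X[:, 1] + rng.normal(size=400) > 0).astype(int)  # made-up labels

# Sample 10 candidate values of C instead of trying every point on a grid.
search = RandomizedSearchCV(
    LogisticRegression(max_iter=1000),
    param_distributions={"C": loguniform(1e-3, 1e2)},
    n_iter=10, cv=5, scoring="accuracy", random_state=0,
)
search.fit(X, y)
best_params = search.best_params_
best_score = search.best_score_
```

Sampling a fixed number of candidates is what keeps the processing time below that of an exhaustive search.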

2.7 Metrics used to assert the results:

AUC: Area under the ROC curve; it tells how well the model can distinguish the classes. The below screenshot is the AUC-ROC curve from the random forest model. Its AUC of 0.78 means that there is a 78% chance that the model ranks a randomly chosen positive case above a randomly chosen negative one.

Precision: The ability of a classifier not to label as positive an instance that is actually negative (Classification Report, n.d.).

F1 Score: The harmonic mean of precision and recall. The best F1 score is 1 and the worst is 0 (Classification Report, n.d.). The random forest model has an F1 score of 0.78 on the test data.

Recall: The ability of a classifier to find all the positive instances (Classification Report, n.d.).

Support: The number of actual occurrences of a class in the dataset. For training data, imbalanced support may indicate structural weaknesses in the reported scores of the classifier and could indicate the need for stratified sampling or rebalancing (Classification Report, n.d.).
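The ranking interpretation of AUC can be checked on a tiny example: the value returned by scikit-learn's `roc_auc_score` equals the share of positive-negative pairs in which the positive case gets the higher score. The labels and scores below are invented.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

y_true = np.array([0, 0, 1, 1, 0, 1])
scores = np.array([0.1, 0.4, 0.35, 0.8, 0.5, 0.7])  # made-up model scores

auc = roc_auc_score(y_true, scores)

# Same quantity by brute force: compare every positive with every negative.
pos = scores[y_true == 1]
neg = scores[y_true == 0]
pairs = [(p > n) + 0.5 * (p == n) for p in pos for n in neg]  # ties count as half
auc_by_pairs = float(np.mean(pairs))
```

In this example 7 of the 9 positive-negative pairs are ordered correctly, so both calculations give 7/9.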

| Model | Accuracy | Precision | Recall | F score | Support |
| --- | --- | --- | --- | --- | --- |
| Logistic Regression | 55% | 0.55 | 0.55 | 0.55 | 675125 |
| Random Forest | 77.9% | 0.78 | 0.78 | 0.78 | 675125 |
| XGBoost | 62% | 0.62 | 0.62 | 0.78 | 675125 |

2.8 Visualization

Visualizations play a vital role in understanding the data and deriving insights from it. Some of the visualizations we produced for the Nigeria data are as follows.

The distribution of the data is shown using histograms. The variables are average_minutes_per_hh, distanceFromDPinMiles, noOfCards, size_of_hh and new_redemption_rate.

new_redemption_rate has values 0 and 1. We can see there is a class imbalance in this column: there are more 1’s than 0’s.

noOfCards takes the values 1, 2, 3 and 4. Size of household shows that households have from 2 to 16 members.

More redemptions occurred on weekends than on weekdays, with Sunday the highest.

This pair plot shows the distribution of all the variables with respect to the redemption rate (output variable). For the noOfCards column, households were most often assigned 2 net cards.

From the graph below, we can say that households of 4 have the highest average redemption rate, and we can observe that the average redemption rate increases with the size of household.

The graph below shows the number of people who frequently stay in a household with respect to nets delivered and dp_commune_3. Hovering over the graph, we can see that the number of people frequently staying in dp_commune_3 is 262579, with the number of nets delivered being 3.

The graph below shows the nets delivered with respect to dp_arrondissement_4 and the females present in a household in Benin. For example, in the OUEDO arrondissement, 1753 nets were delivered to households with 0 females; more detail can be seen by hovering over the graph.

- Challenges and Suggestions

3.1 Challenges Faced / Risks

I faced quite a few challenges with the data.

- The first challenge came when I computed the correlations of the variables in the dataset. The correlation values were low and there was no significant relationship between the variables.

- After computing the correlations, I was left with very few variables to build the model, and these predictor variables were not very helpful for building a good model.

- The nature of the data was not suitable for a regression model, so I had to switch to a classification model to get better results.

- The Nigeria dataset was huge, with 1.3 million rows, and it took a long time to process. Building the models took 8 to 10 hours.

- I also had registration data for Benin. Comparing the variables between Benin and Nigeria, I saw that the Benin data is rich, whereas the Nigeria data had very few significant variables to rely on.

3.2 Future Work

- The model built on the Nigeria dataset can be very useful for other country programs with the same objective, for example the same project under way in Benin.

- The Benin data needs to be preprocessed so that it is compatible with the model built for Nigeria.

- Some of the columns that need to be processed are distance, average minutes per household, size of household and day of the distribution.

- The distance variable needs to be calculated from the latitudes and longitudes of the distribution points and households.

- In fact, modelling on the Benin data would likely give a better model because of its numerous significant variables.
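One way to derive the distance variable from coordinates is the haversine (great-circle) formula. The report does not state how distanceFromDPinMiles was originally computed, so treating it as a great-circle distance is an assumption, and the function name and example coordinates are hypothetical.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius

def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))

# Example: a household and a distribution point 0.01 degrees of latitude apart.
d = haversine_miles(6.50, 2.60, 6.51, 2.60)
```

One degree of latitude is roughly 69 miles, so the example distance is about 0.69 miles.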

3.3 Suggestions

I would suggest that the organization collect field data using variables that are common to the data collected in other countries. This would make running the models much easier and more efficient. I would also suggest gathering more information about the project participants, such as their age, income and education, which would help in building machine learning models and reaching conclusive results. During the descriptive statistics of the Benin data, it was observed that children below age 5 were neglected in the net distribution. This should be addressed.

3.4 Lessons Learnt

- Statistics plays a very important role in a data analytics project. Whether it is transforming variables for a better fit, choosing the right kind of model for the data, or interpreting the modelling results, statistics plays a huge part in dealing with the data.

- Real-world data is very unorganized, and I had to go the extra mile in research to improve the data quality and get better results.

- A major part of the project was preprocessing and analyzing the data, which gave us better model results.

- To start the project, I had to do some background research on the organization's similar previous project, called MIRA.

- Conclusion

From the results of logistic regression, random forest and XGBoost, random forest gave the highest test accuracy, 78%. The model gave a similar accuracy of 77.89% with k-fold cross validation, which indicates that it is not overfitting. From this I conclude that random forest is the best model for our data. For logistic regression and XGBoost I got accuracies of 55% and 62% respectively.

Appendix:

- URL – The data has been obtained from the organization database, where it has been stored.

- Title (Type: string) – Registration of the beneficiaries

- Authors –This is a survey dataset, so the author cannot be determined.

References:

Blog, M. (n.d.). How to Interpret Regression Analysis Results: P-values and Coefficients. Retrieved from The Minitab Blog: https://blog.minitab.com/blog/adventures-in-statistics-2/how-to-interpret-regression-analysis-results-p-values-and-coefficients

Boyle, T. (n.d.). Hyperparameter Tuning. Retrieved from towardsdatascience: https://towardsdatascience.com/hyperparameter-tuning-c5619e7e6624

Datalab, A. (n.d.). What is the Variance Inflation Factor (VIF). Retrieved from Medium: https://medium.com/@analyttica/what-is-the-variance-inflation-factor-vif-d1dc12bb9cf5

Egrot, M., Houngnihin, R., Baxerres, C., Damien, G., Djènontin, A., Chandre, F., . . . Remoué, F. (2014). Reports of long-lasting insecticidal bed nets catching on fire: a threat to bed net users and to successful malaria control? Retrieved from NIH: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4119472/

Knippenberg, E., Jensen, N., Constas, M. (2019). Quantifying household resilience with high frequency data: Temporal dynamics and methodological options. ELSEVIER, 1-15.

Morde, V. (n.d.). XGBoost Algorithm: Long May She Reign! Retrieved from towardsdatascience: https://towardsdatascience.com/https-medium-com-vishalmorde-xgboost-algorithm-long-she-may-rein-edd9f99be63d

Swaminathan, S. (n.d.). Logistic Regression — Detailed Overview. Retrieved from towardsdatascience: https://towardsdatascience.com/logistic-regression-detailed-overview-46c4da4303bc