Problem Statement:

The data was provided in JSON format, and converting it into a readable form proved difficult. Once converted, the data had to be preprocessed so that the model could learn from it and extract the relevant information. A suitable train-test split ratio also had to be fixed. After trying various models on the data to obtain the best accuracy, we faced another challenge: the data was highly imbalanced across the 5 classes {0, 1, 2, 3, 4}. Class 0 was the majority class with 6942 samples, followed by class 1 with 1506, class 2 with 617, class 3 with 553 and class 4 with 410. Machine learning classifiers cope poorly with imbalanced training sets because they are sensitive to the proportions of the different classes, so the accuracies we obtained were misleading.
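To see why accuracy misleads here, consider a trivial classifier that always predicts class 0. The sketch below uses only the class counts quoted above; the "always predict the majority class" baseline is an illustrative assumption, not one of the models from the project.

```python
# Class counts quoted in the text above.
class_counts = {0: 6942, 1: 1506, 2: 617, 3: 553, 4: 410}
total = sum(class_counts.values())

# Hypothetical baseline: always predict the majority class.
majority = max(class_counts, key=class_counts.get)

# Plain accuracy: fraction of all samples labelled correctly.
accuracy = class_counts[majority] / total

# Balanced accuracy: mean of the per-class recalls.
# The baseline has recall 1 on the majority class and 0 on every other class.
recalls = [1.0 if label == majority else 0.0 for label in class_counts]
balanced_accuracy = sum(recalls) / len(recalls)

print(f"accuracy          = {accuracy:.3f}")
print(f"balanced accuracy = {balanced_accuracy:.3f}")
```

The baseline scores roughly 69% accuracy without learning anything about classes 1 to 4, while its balanced accuracy is only 0.2.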

Action:

Data Preprocessing

The JSON data was first loaded into a dataframe to find the number of predictor variables (106428) and the number of data points (12536). The dataframe was then converted into a sparse matrix, and preprocessing was carried out on that sparse representation. Sparse matrices suit large matrices in which most entries are missing or zero: they store only the entries that are present, which greatly reduces the memory that would otherwise be needed. SciPy provides several sparse matrix formats; we used the COO (coordinate) format to represent our data.
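A minimal sketch of building a COO matrix with SciPy is shown below. The JSON layout (one object per data point, mapping a predictor index to its non-zero value) and the tiny sizes are assumptions for illustration; the structure of the actual file is not described here.

```python
import json

from scipy.sparse import coo_matrix

# Hypothetical JSON layout: one object per data point,
# mapping a predictor index (as a string) to its non-zero value.
raw = '[{"0": 1.0, "3": 2.5}, {"2": 4.0}, {"1": 0.5, "3": 1.5}]'
records = json.loads(raw)
n_features = 4  # toy size; the real data has 106428 predictors

# COO format stores three parallel lists: row index, column index, value.
rows, cols, vals = [], [], []
for i, record in enumerate(records):
    for key, value in record.items():
        rows.append(i)
        cols.append(int(key))
        vals.append(value)

X = coo_matrix((vals, (rows, cols)), shape=(len(records), n_features))

# COO is convenient for construction; CSR is the usual choice
# for row slicing and for passing to estimators.
X_csr = X.tocsr()

print(X.shape, "stored entries:", X.nnz)
```

Only the five non-zero entries are stored, rather than all twelve cells of the dense matrix.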

Split Ratio

Initially a split ratio of 65:35 was chosen for the dataset, but after applying various models such as Support Vector Machines, Random Forest and Neural Networks, we found that a split ratio of 80:20 gave better results.
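An 80:20 split could be produced with scikit-learn along the following lines. The toy data, the `random_state` and the use of `stratify` (which keeps the class proportions the same in both parts) are assumptions of this sketch; the text does not say whether the original split was stratified.

```python
import numpy as np
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Toy imbalanced labels (100 samples over 5 classes) and random features.
y = np.repeat([0, 1, 2, 3, 4], [70, 15, 6, 5, 4])
X = rng.normal(size=(len(y), 8))

# test_size=0.20 gives the 80:20 ratio; stratify=y preserves class proportions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=0
)

print("train:", X_train.shape, "test:", X_test.shape)
print("train class counts:", np.bincount(y_train))
```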

Balancing of Data

To deal with the imbalanced dataset, various techniques such as stratified k-fold sampling, NearMiss and the SMOTE algorithm were used. The Synthetic Minority Over-sampling Technique (SMOTE) gave the best results among them. After applying it, every class had an equal number of samples, 6942, matching the size of the majority class.
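The idea behind SMOTE is to create each synthetic minority sample by interpolating between an existing minority sample and one of its nearest neighbours from the same class. The following is a simplified NumPy sketch of that idea, not the implementation used in the project; the function name `smote_oversample`, its parameters and the toy data are hypothetical. It assumes every class has at least two samples and builds a dense pairwise distance matrix, so it is only suitable for small examples.

```python
import numpy as np


def smote_oversample(X, y, k=5, seed=0):
    """Oversample every class up to the majority class size (SMOTE-style)."""
    rng = np.random.default_rng(seed)
    classes, counts = np.unique(y, return_counts=True)
    target = counts.max()
    X_parts, y_parts = [X], [y]

    for cls, count in zip(classes, counts):
        n_new = target - count
        if n_new == 0:
            continue  # the majority class is left unchanged
        X_cls = X[y == cls]

        # Pairwise Euclidean distances within the class.
        dists = np.linalg.norm(X_cls[:, None, :] - X_cls[None, :, :], axis=2)
        np.fill_diagonal(dists, np.inf)  # a sample is not its own neighbour
        k_eff = min(k, len(X_cls) - 1)
        neighbours = np.argsort(dists, axis=1)[:, :k_eff]

        # Pick a base sample and one of its neighbours for each new point.
        base = rng.integers(0, len(X_cls), size=n_new)
        chosen = neighbours[base, rng.integers(0, k_eff, size=n_new)]

        # Interpolate at a random position on the segment between them.
        gap = rng.random((n_new, 1))
        synthetic = X_cls[base] + gap * (X_cls[chosen] - X_cls[base])

        X_parts.append(synthetic)
        y_parts.append(np.full(n_new, cls))

    return np.vstack(X_parts), np.concatenate(y_parts)


# Toy imbalanced data: 40, 10 and 6 samples in three classes.
rng = np.random.default_rng(1)
y = np.repeat([0, 1, 2], [40, 10, 6])
X = rng.normal(size=(len(y), 3))

X_bal, y_bal = smote_oversample(X, y)
print("before:", np.bincount(y), "after:", np.bincount(y_bal))
```

In practice a library implementation such as `SMOTE` from imbalanced-learn would normally be used instead. Oversampling is usually applied to the training portion only, so that the test set keeps the original class distribution.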

Results:

Initially a variety of models such as Logistic Regression, Decision Trees and Random Forest were tried on the imbalanced data. They produced good accuracies, but these were misleading. After applying SMOTE, the accuracies dropped. The closest contenders after balancing were Support Vector Machines, Random Forest and the MLP Classifier, with the MLP Classifier proving to be the best among them.

The parameters of the MLP model were tuned until the best accuracy was obtained.
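One common way to tune an MLP is a cross-validated grid search scored on balanced accuracy, sketched below with scikit-learn. The parameter grid, the toy data and the choice of `GridSearchCV` are assumptions for illustration; the text does not state which parameters were varied or how the search was run.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.neural_network import MLPClassifier

rng = np.random.default_rng(0)

# Toy 3-class data: three Gaussian clusters in two dimensions.
centers = np.array([[0.0, 0.0], [3.0, 3.0], [-3.0, 3.0]])
y = np.repeat([0, 1, 2], 30)
X = centers[y] + rng.normal(scale=0.7, size=(len(y), 2))

# Hypothetical grid: hidden layer width and L2 penalty strength.
param_grid = {
    "hidden_layer_sizes": [(8,), (16,)],
    "alpha": [1e-4, 1e-2],
}

search = GridSearchCV(
    MLPClassifier(max_iter=500, random_state=0),
    param_grid,
    scoring="balanced_accuracy",  # mean per-class recall, not plain accuracy
    cv=3,
)
search.fit(X, y)

print("best parameters:", search.best_params_)
print("best balanced accuracy:", round(search.best_score_, 3))
```

Scoring on balanced accuracy rather than plain accuracy keeps the search from favouring models that only do well on the majority class.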

Insights:

The models we tried produced a variety of results; decision trees performed fairly well given the non-linear nature of the data. The difference between the balanced and unbalanced accuracies was due to the zero-inflated dataset. Using PCA with models such as Random Forest, SVM and Neural Networks produced better results, and Neural Networks were chosen for modelling. The use of a robust model and balancing techniques helped us obtain a true measure of our model's performance, and the choice of appropriate hyperparameters helped raise our balanced accuracies. This project helped us understand:

1) Handling large data in the right way

2) An unbalanced dataset can lead to the mistaken belief that accuracy is a true measure of performance

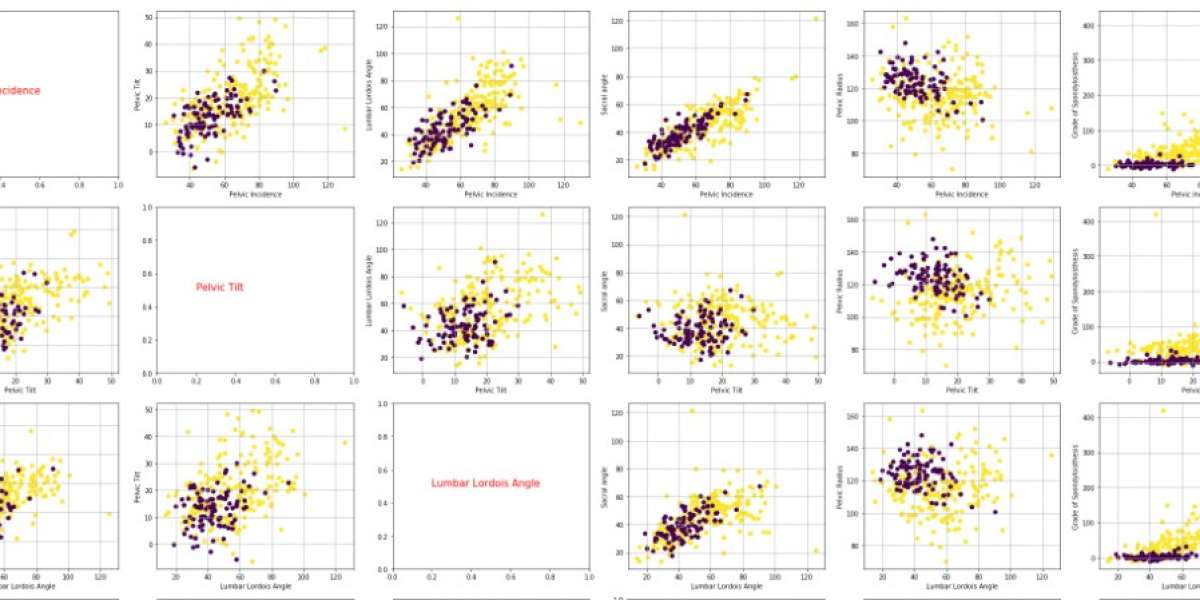

3) Understanding the relation between the predictors and the response variable

4) Estimating the right parameters is needed to improve the model

5) The training-testing ratio is vital in determining accuracy